Concepts

How evaluation works in Respan: scores, graders, evaluators, datasets, and experiments.

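Evaluation in Respan lets you measure and improve the quality of your LLM and agent outputs. The system supports two modes: offline experiments, which run an evaluator over a dataset, and online evaluation, which scores live production traffic as it arrives.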

A score is the output of an evaluation. It is attached to a specific span and represents a quality assessment for that LLM request.

Numerical scores are best for ratings and quality metrics. The range is defined by the grader’s min_score and max_score.

Boolean scores work for pass/fail evaluations like content safety checks.

Categorical scores handle multi-choice classifications from predefined choices.

Comment scores capture qualitative feedback as free-form text.

log_id: links the score to its span

evaluator_id or evaluator_slug: identifies which evaluator produced the score

is_passed: whether the evaluation passed defined criteria

Each evaluator can only produce one score per span.
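As a rough illustration of the score fields and the one-score-per-evaluator-per-span rule, here is a minimal in-memory sketch. It is not the Respan SDK: the `Score` and `ScoreStore` classes and the `value` field name are hypothetical, and rejecting a duplicate score is an assumption (the real system may handle a second score differently, for example by overwriting it).

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple, Union

# A score value can be numerical, boolean, or text (categorical choice or comment).
ScoreValue = Union[float, bool, str]


@dataclass(frozen=True)
class Score:
    log_id: str                        # links the score to its span
    evaluator_slug: str                # identifies the evaluator that produced the score
    value: ScoreValue                  # hypothetical field name for the score payload
    is_passed: Optional[bool] = None   # whether the evaluation passed defined criteria


class ScoreStore:
    """Hypothetical store that allows one score per (span, evaluator) pair."""

    def __init__(self) -> None:
        self._scores: Dict[Tuple[str, str], Score] = {}

    def add(self, score: Score) -> None:
        key = (score.log_id, score.evaluator_slug)
        if key in self._scores:
            # Assumption: a duplicate is rejected rather than overwritten.
            raise ValueError(
                f"evaluator {score.evaluator_slug!r} already scored span {score.log_id!r}"
            )
        self._scores[key] = score
```

Two different evaluators can still score the same span; only a second score from the same evaluator is refused in this sketch.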

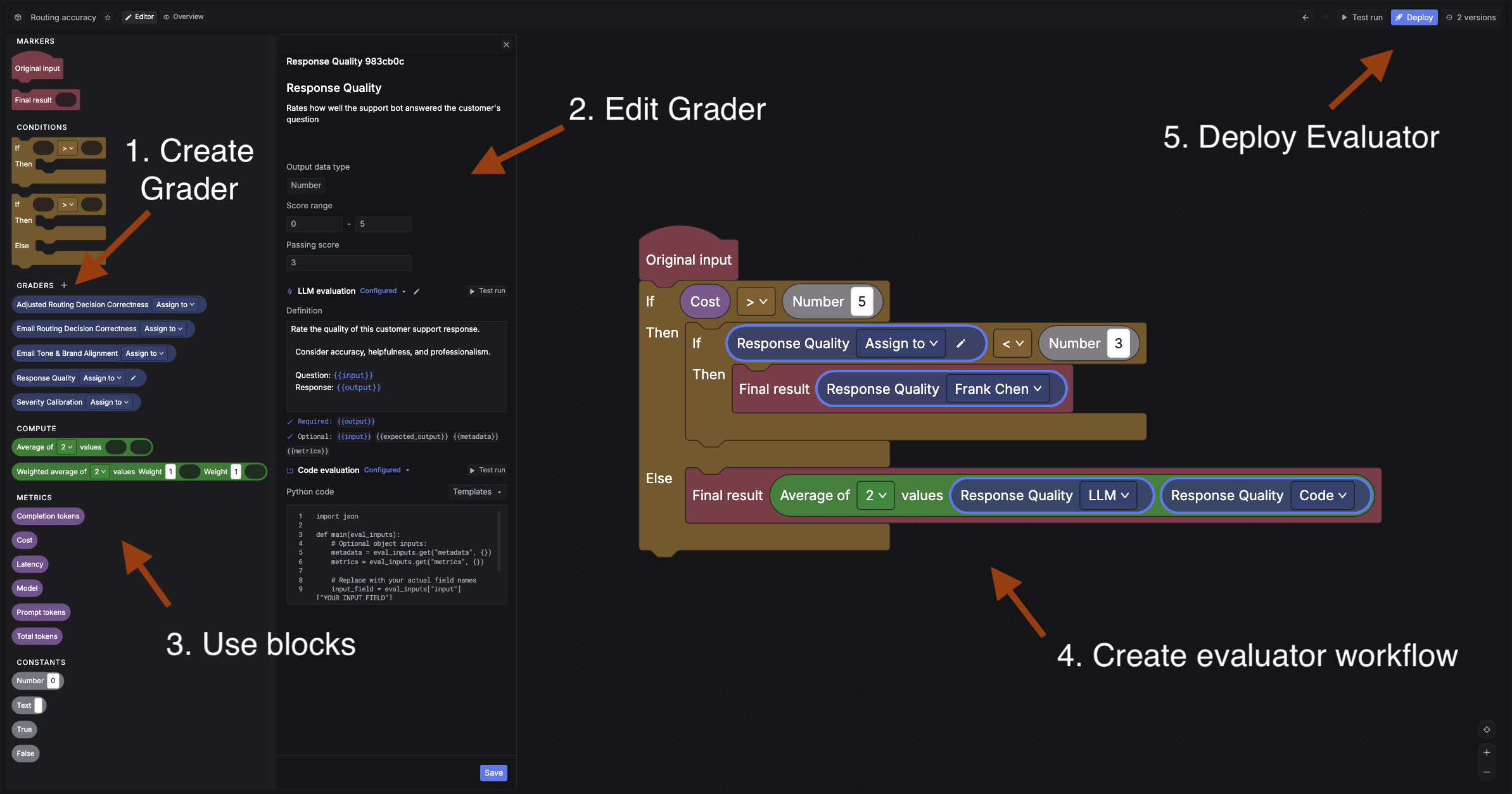

An evaluator is a workflow that scores your outputs. It is built from graders and connected with conditions using a visual block builder.

A grader is the scoring unit inside an evaluator. It defines what to measure and how to score it. Respan supports three grader types:

LLM grader: a language model judges the output based on a definition you write. The definition must include {{output}} and can reference {{input}}, {{expected_output}}, {{metadata}}, and {{metrics}}. You configure the judge model, temperature, and scoring rubric.

Code grader: a Python function scores the output programmatically. You write a main(eval_inputs) function that returns a score. Use this for format validation, length constraints, regex matching, or any deterministic check.

Human grader: your team reviews and scores outputs manually. Often paired with categorical or comment scores. The grader description helps annotators understand the scoring criteria.
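To make the code grader concrete, here is a minimal sketch of a `main(eval_inputs)` function that performs a deterministic length and format check. The shape of `eval_inputs` is an assumption: this example treats it as a dict with an `"output"` key, and `MAX_CHARS` is a hypothetical limit chosen for illustration. Check the Evaluators reference for the actual input structure and expected return type.

```python
import re

MAX_CHARS = 500  # hypothetical length limit for this example


def main(eval_inputs):
    """Return 1.0 if the output is non-empty, within the length limit,
    and ends with sentence punctuation; otherwise return 0.0."""
    # Assumption: eval_inputs is dict-like and holds the model output under "output".
    output = str(eval_inputs.get("output", "")).strip()

    within_length = 0 < len(output) <= MAX_CHARS
    ends_cleanly = re.search(r"[.!?]$", output) is not None

    return 1.0 if (within_length and ends_cleanly) else 0.0
```

Because the check is deterministic, the same output always receives the same score, which is the main reason to prefer a code grader over an LLM grader for format rules.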

A single grader can include both LLM and code definitions. During a run, Respan uses whichever config matches the evaluation mode.
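The placeholder rule for LLM grader definitions ({{output}} is required; {{input}}, {{expected_output}}, {{metadata}}, and {{metrics}} are optional) can be checked locally before you paste a definition into the builder. The sketch below is an illustration only: `validate_definition` and `render_definition` are hypothetical helpers, and the substitution shown here is a plain string replacement, not necessarily how Respan renders definitions.

```python
import re

# Placeholder names taken from the LLM grader description above.
ALLOWED = {"output", "input", "expected_output", "metadata", "metrics"}
PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def validate_definition(definition: str) -> None:
    """Raise ValueError if {{output}} is missing or an unknown placeholder appears."""
    names = set(PLACEHOLDER.findall(definition))
    if "output" not in names:
        raise ValueError("definition must reference {{output}}")
    unknown = names - ALLOWED
    if unknown:
        raise ValueError(f"unknown placeholders: {sorted(unknown)}")


def render_definition(definition: str, values: dict) -> str:
    """Substitute placeholders with values; missing values become empty strings."""
    validate_definition(definition)
    return PLACEHOLDER.sub(lambda m: str(values.get(m.group(1), "")), definition)


definition = "Rate the answer {{output}} to the question {{input}} from 1 to 5."
prompt = render_definition(definition, {"input": "2+2?", "output": "4"})
```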

A simple evaluator connects: Original input -> LLM grader -> Final result.

A more advanced evaluator can add conditions and multiple graders. For example: run an LLM grader first, and if the score is below 3, route to a human grader for manual review. You can also add compute blocks (averages, weighted scores), metrics blocks (latency, cost), and constants.
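The routing example above can be sketched in plain Python to show the control flow the block builder expresses visually. Everything here is hypothetical: `run_evaluator` and `weighted_score` are illustrative names, the graders are passed in as ordinary callables, and a real human grader would be an asynchronous review step rather than a function call.

```python
from typing import Callable, Dict

REVIEW_THRESHOLD = 3  # from the example above: scores below 3 go to human review


def run_evaluator(
    output: str,
    llm_grader: Callable[[str], float],
    human_grader: Callable[[str], float],
) -> dict:
    """Run the LLM grader first; route low scores to the human grader."""
    llm_score = llm_grader(output)
    if llm_score < REVIEW_THRESHOLD:
        return {"score": human_grader(output), "source": "human", "llm_score": llm_score}
    return {"score": llm_score, "source": "llm", "llm_score": llm_score}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Compute-block analogue: weighted average of several grader scores."""
    total_weight = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total_weight
```

Keeping the LLM score in the result even when a human overrides it makes it possible to compare the two graders later.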

Evaluators are versioned. You can test, deploy, and roll back to previous versions.

See Evaluators for setup instructions and the full block builder reference.

A dataset is a collection of test inputs for your experiments. Each row represents one test case. You can build datasets in two ways:

From production data: sample spans from your traced data using filters. Go to Datasets, click Create dataset, and use Insert by sampling to pull spans matching your criteria.

From CSV: upload a CSV file with columns matching your prompt variables and an optional ideal_output column. Use Insert from CSV and map the fields.

Datasets can include both the input variables for your prompt and expected outputs for comparison during evaluation.
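If you prepare the CSV programmatically, a sketch like the following produces a file with one column per prompt variable plus the optional ideal_output column. The variable names `question` and `tone` are hypothetical and stand in for whatever variables your prompt uses; `build_dataset_csv` is an illustrative helper, not part of Respan.

```python
import csv
import io

# Hypothetical rows for a prompt that uses the variables "question" and "tone".
rows = [
    {"question": "What is the capital of France?", "tone": "formal", "ideal_output": "Paris"},
    {"question": "What is 2 + 2?", "tone": "casual", "ideal_output": "4"},
]


def build_dataset_csv(rows, variables):
    """Return CSV text with one column per prompt variable plus ideal_output."""
    fieldnames = [*variables, "ideal_output"]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)  # rows missing ideal_output get an empty cell
    return buffer.getvalue()


csv_text = build_dataset_csv(rows, ["question", "tone"])
```

Write `csv_text` to a file and upload it with Insert from CSV, mapping each column to its field.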

See Datasets for details.

An experiment is an offline evaluation run. It generates outputs by running every row in your dataset through a prompt or model, then runs the evaluator workflow over each output to produce scores, and aggregates the results.

You can use experiments to:

Results show per-row scores and aggregate metrics so you can pick the best configuration before deploying.
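Conceptually, an experiment is a loop over dataset rows followed by an aggregation step. The sketch below shows that shape under stated assumptions: `run_experiment`, `generate`, and `evaluate` are hypothetical names, the generator and evaluator are plain callables standing in for a prompt/model call and an evaluator workflow, and the only aggregate computed is a mean.

```python
from statistics import mean


def run_experiment(dataset, generate, evaluate):
    """Generate an output per row, score it, and aggregate the scores."""
    results = []
    for row in dataset:
        output = generate(row)          # stand-in for running the prompt or model
        score = evaluate(row, output)   # stand-in for the evaluator workflow
        results.append({"row": row, "output": output, "score": score})

    aggregate = {
        "rows": len(results),
        "mean_score": mean(r["score"] for r in results) if results else None,
    }
    return results, aggregate


# Toy usage: a fixed "model" and an exact-match evaluator.
dataset = [
    {"question": "2+2", "ideal_output": "4"},
    {"question": "3+3", "ideal_output": "6"},
]
results, aggregate = run_experiment(
    dataset,
    generate=lambda row: "4",
    evaluate=lambda row, out: 1.0 if out == row["ideal_output"] else 0.0,
)
```

Running the same dataset through two different `generate` callables and comparing the aggregates mirrors how you would compare configurations in an experiment.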

See Experiments for the full guide.

Online evaluation runs evaluators on live production traffic. Instead of building a dataset and running experiments manually, you attach evaluators to incoming spans and score them automatically as they arrive.

Use online evals to:

See Online evals for setup.