Use the gateway

Call 250+ LLMs through a unified API with automatic tracing, fallbacks, and caching.

Add the Docs MCP to your AI coding tool to get help building with Respan. No API key needed.

Respan’s AI Gateway lets you call 250+ large language models (LLMs) through one unified API.

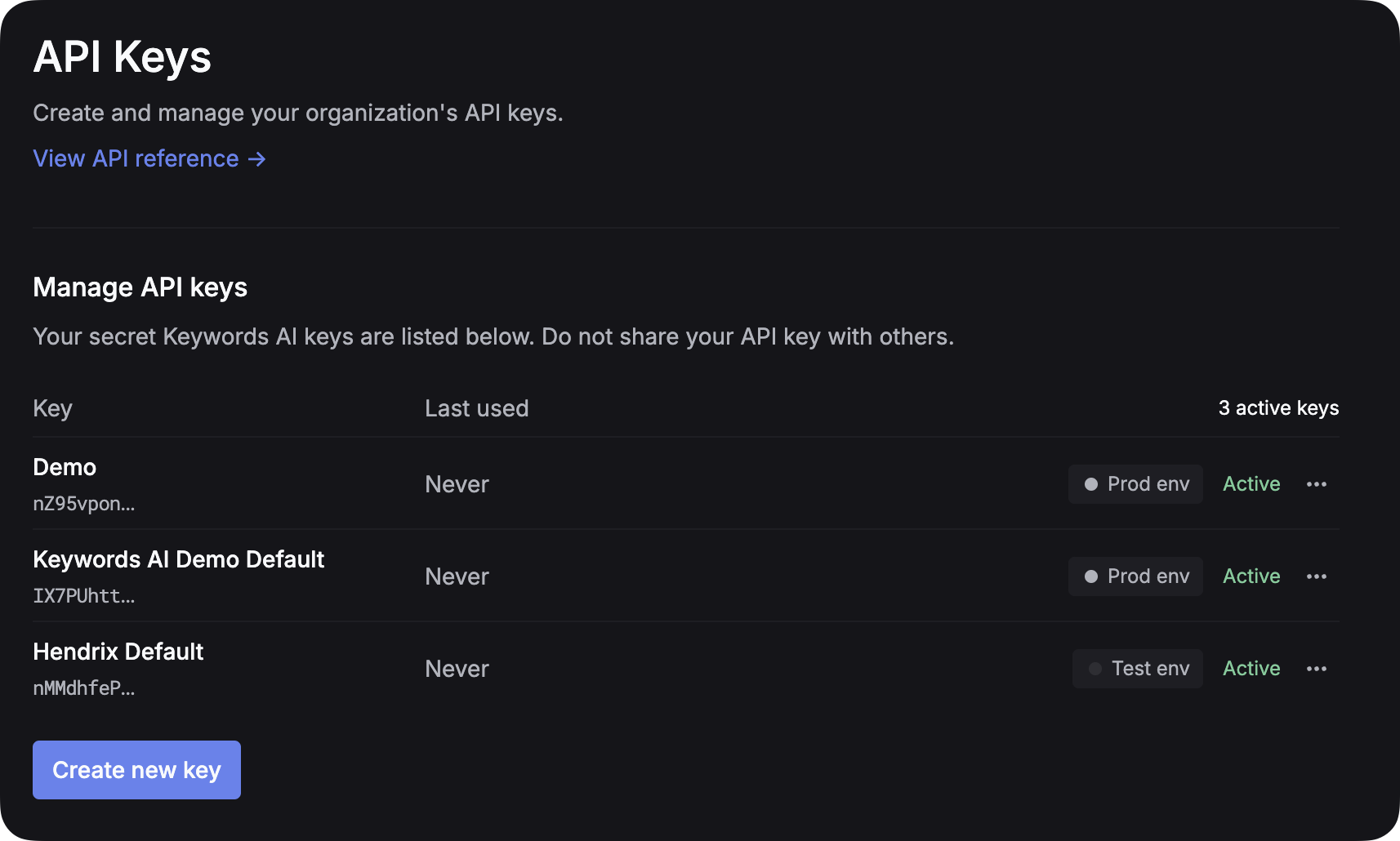

After you create an account on Respan, you can get your API key from the API keys page.

Environment Management: To separate test and production environments, create separate API keys for each environment instead of using an env parameter. This approach provides better security and clearer separation between your development and production workflows.
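As a rough illustration of the one-key-per-environment approach, the sketch below picks an API key based on the environment name. The environment variable names (`RESPAN_API_KEY_TEST`, `RESPAN_API_KEY_PROD`) and the helper `api_key_for` are hypothetical and not part of Respan; substitute whatever your deployment uses.

```python
import os

# Hypothetical variable names, one per environment. Each should hold a
# separate API key created on the API keys page.
KEY_ENV_VARS = {
    "test": "RESPAN_API_KEY_TEST",
    "production": "RESPAN_API_KEY_PROD",
}


def api_key_for(environment, env=os.environ):
    """Return the API key configured for the given environment name."""
    try:
        var_name = KEY_ENV_VARS[environment]
    except KeyError:
        raise ValueError(f"unknown environment: {environment!r}") from None
    key = env.get(var_name)
    if not key:
        raise RuntimeError(f"{var_name} is not set")
    return key
```

Failing loudly when a key is missing helps avoid silently sending test traffic with a production key, or the reverse.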

Point any LLM SDK at https://api.respan.ai/api/ and use your Respan API key.
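A minimal sketch of what such a call might look like without any SDK, using only the Python standard library. It assumes the gateway accepts OpenAI-style chat completion requests at a `chat/completions` path under the base URL and uses Bearer-token authentication; both are assumptions, so check the API reference for the exact endpoint. The helper `build_chat_request` is hypothetical.

```python
import json
import urllib.request

BASE_URL = "https://api.respan.ai/api/"  # base URL given in the text above


def build_chat_request(api_key, model, prompt):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=BASE_URL + "chat/completions",  # assumed OpenAI-compatible path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To send the request, pass the returned object to `urllib.request.urlopen` and parse the JSON response body. With an SDK, the equivalent step is usually setting its base URL option to the gateway URL and supplying your Respan API key.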

Now that you can make a basic call, learn about customer_identifier, metadata (custom properties), and the three ways to send platform-specific params. Browse available models on the Models page.

Thinking mode allows supported models to show their reasoning process before providing the final answer.

Choose a model that supports thinking, such as gpt-5 or claude-sonnet-4-20250514. See Log content types for details on the response structure.

Pass images using image_url content blocks or via prompt variables.
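The sketch below shows one way an `image_url` content block could be assembled, assuming the gateway follows the OpenAI-style chat message format, where `content` is a list of typed blocks. The helper `image_message` and the example URL are hypothetical.

```python
def image_message(text, image_url):
    """Build a user message that pairs a text prompt with one image."""
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": text},
            # OpenAI-style image block; the URL must be reachable by the model
            {"type": "image_url", "image_url": {"url": image_url}},
        ],
    }


message = image_message(
    "What is in this picture?",
    "https://example.com/cat.png",  # placeholder URL for illustration
)
```

The resulting dictionary goes into the `messages` list of a chat request in place of a plain-text message.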

Prompt caching stores the model’s intermediate computation state for a prompt so that later requests can reuse it. Responses can still vary from call to call, but the model saves compute because it doesn’t need to reprocess the entire prompt from scratch.