Respan caching

This page covers Respan’s response caching — storing and reusing exact request/response pairs. For provider-level prompt caching (Anthropic), see Prompt caching.

A cache stores the response to an LLM request and returns it when an identical request arrives again. Enable caching to reduce LLM costs and improve response times.

- Reduce latency: A cache hit is served from the stored response, so no repeated API call to the model provider is needed.

- Save costs: A cache hit reuses the stored response instead of paying for a new LLM call.

Turn on caching by setting cache_enabled to true. Respan caches the whole conversation: the system message, the user message, and the response.

OpenAI Python SDK
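
A minimal sketch of enabling the cache through the OpenAI Python SDK. It assumes that Respan accepts cache_enabled as an extra field in the request body, passed here with the SDK's extra_body argument. The environment variable names RESPAN_BASE_URL and RESPAN_API_KEY, the helper names, and the model name are placeholders for illustration, not documented values.

```python
import os


def cache_params(enabled: bool = True) -> dict:
    """Build the extra request fields that switch caching on or off."""
    return {"cache_enabled": enabled}


def cached_chat(messages: list, model: str = "gpt-4o-mini"):
    """Send a chat completion with caching enabled (assumed request shape)."""
    # Imported lazily so cache_params() works without the SDK installed.
    from openai import OpenAI

    client = OpenAI(
        base_url=os.environ["RESPAN_BASE_URL"],  # hypothetical variable name
        api_key=os.environ["RESPAN_API_KEY"],    # hypothetical variable name
    )
    # extra_body merges additional fields into the JSON request body.
    return client.chat.completions.create(
        model=model,
        messages=messages,
        extra_body=cache_params(True),
    )
```

Because the whole conversation is cached, a second call to cached_chat with the same system and user messages would be expected to come back as a cache hit, while any change to the messages would count as a new request.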

OpenAI TypeScript SDK

Standard API

Cache parameters

cache_enabled: Enable or disable caches.

Time-to-live (TTL) for the cache in seconds.

Cache behavior options.
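
To make the idea of exact-match caching with a TTL concrete, here is a toy in-memory sketch. It is not Respan's implementation: the class name, the hashing scheme, and the injectable clock are all assumptions chosen for illustration. It shows only that the key is derived from the full message list and that an entry stops being served once its time-to-live has elapsed.

```python
import hashlib
import json
import time


class ExactMatchCache:
    """Toy cache keyed on the full message list, with per-entry expiry."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock          # injectable so expiry can be tested
        self._store = {}            # key -> (expires_at, response)

    @staticmethod
    def _key(messages) -> str:
        # Canonical JSON so identical conversations hash identically.
        canonical = json.dumps(messages, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get(self, messages):
        """Return the stored response, or None on a miss or expired entry."""
        key = self._key(messages)
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, response = entry
        if self.clock() >= expires_at:
            del self._store[key]    # drop the stale entry
            return None
        return response

    def put(self, messages, response):
        """Store a response that expires ttl_seconds from now."""
        expires_at = self.clock() + self.ttl_seconds
        self._store[self._key(messages)] = (expires_at, response)
```

In this sketch, changing any part of the conversation (for example, dropping the system message) produces a different key and therefore a miss, which mirrors the "exact request" behavior described on this page.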

View caches

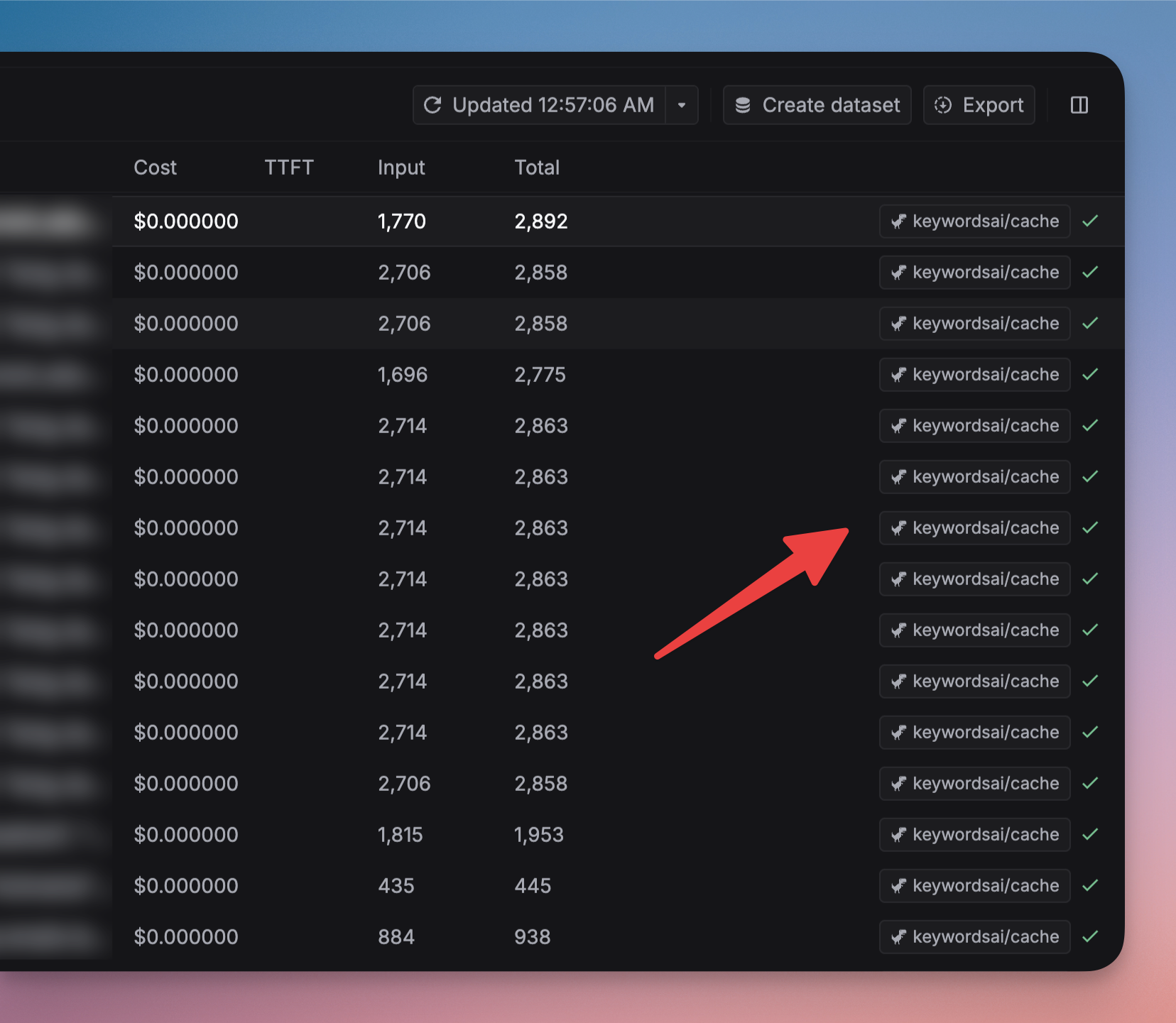

Cached responses appear on the Logs page with the model tag respan/cache. You can also filter the logs by the Cache hit field.

Omit logs when cache hit

To skip logging on cache hits, set the omit_logs parameter to true or go to Caches in Settings. With this enabled, a cache hit does not generate a new LLM log.