Reliability

For the complete list of request parameters, see the API reference.

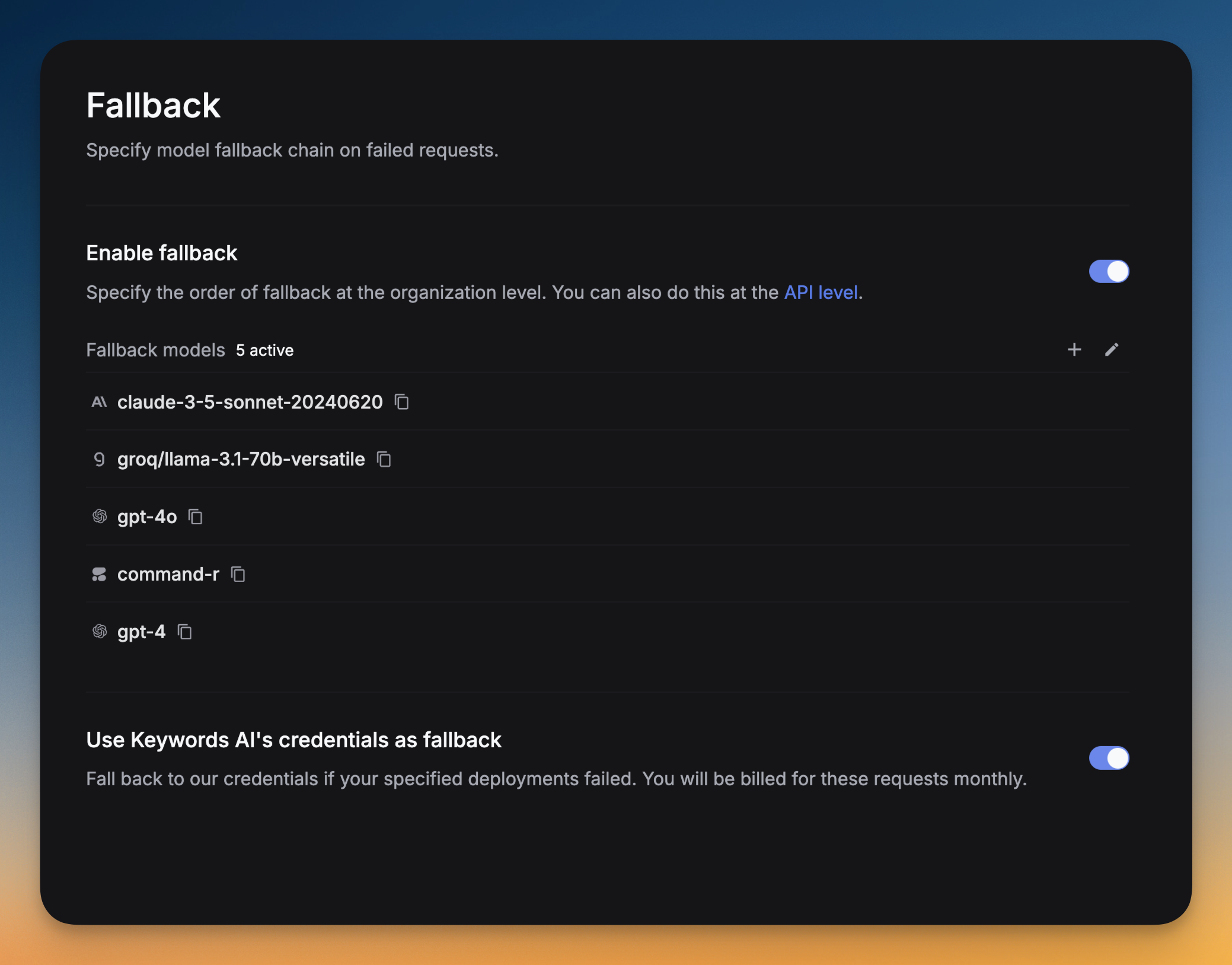

Fallback models

Respan catches errors that occur during a request and falls back to the models listed in the fallback_models field. This helps you avoid downtime and keep your application available.

Via UI

Go to Settings -> Fallback, click Add fallback models, and select the models you want to use as fallbacks.

Drag and drop the models to reorder them. Models are tried in the order in which they appear in the list.
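The same fallback order can also be sent with a request through the fallback_models field. The following is a minimal sketch of such a request body, assuming an OpenAI-style chat payload. The model names are placeholders and everything other than fallback_models is an assumption, so check the API reference for the exact schema.

```python
import json


def build_fallback_request(primary_model, fallback_models, messages):
    """Build a chat request body that lists fallback models in priority order."""
    return {
        "model": primary_model,
        "messages": messages,
        # Tried in list order if the request to the primary model errors.
        "fallback_models": list(fallback_models),
    }


body = build_fallback_request(
    "gpt-4o",
    ["claude-3-5-sonnet-20240620", "gpt-4o-mini"],  # placeholder model names
    [{"role": "user", "content": "Hello"}],
)
print(json.dumps(body, indent=2))
```

Keeping the list ordered from most to least preferred mirrors the drag-and-drop ordering in the UI.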

Load balancing

Load balancing distributes request load across different deployments. You can assign a weight to each deployment based on its rate limit and your preference.
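To build intuition for how weights translate into traffic share, here is a small simulation of weighted random selection. It is an illustrative sketch only, not Respan's actual routing logic; the model names and weights are placeholders.

```python
import random
from collections import Counter


def pick_model(weights, rng):
    """Pick one model name with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


weights = {"gpt-4o": 3, "gpt-4o-mini": 1}  # placeholder models and weights
rng = random.Random(0)  # seeded so the run is repeatable
total = 10_000
counts = Counter(pick_model(weights, rng) for _ in range(total))
share = counts["gpt-4o"] / total
print(f"gpt-4o share: {share:.2f}")  # should land near 3 / (3 + 1)
```

With weights of 3 and 1, roughly three out of four requests go to the first model over a large number of requests.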

Load balancing between models

Add models

Click Add model to add each model, then specify its weight and add your own credentials.

Load balancing between deployments

A deployment corresponds to one credential. If you add one OpenAI API key, you have one deployment; if you add two OpenAI API keys, you have two deployments.

In the platform, you can add multiple deployments for the same provider and specify a load balancing weight for each one.

You can also load balance between deployments from your codebase by using the customer_credentials field.
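As a rough sketch of what this could look like, the snippet below builds a request body with two OpenAI deployments and a weight for each. Only the customer_credentials field name comes from this page; the shape of each entry (the credentials and weight keys), the environment variable names, and the model name are assumptions made for illustration, so confirm the schema in the API reference.

```python
import os


def build_deployments(keys_and_weights):
    """Return one entry per API key; each key counts as one deployment."""
    return [
        # Assumed entry shape: provider credentials plus a balancing weight.
        {"credentials": {"openai": {"api_key": key}}, "weight": weight}
        for key, weight in keys_and_weights
    ]


deployments = build_deployments([
    (os.environ.get("OPENAI_API_KEY_A", "sk-placeholder-a"), 0.7),
    (os.environ.get("OPENAI_API_KEY_B", "sk-placeholder-b"), 0.3),
])

body = {
    "model": "gpt-4o-mini",  # placeholder model name
    "messages": [{"role": "user", "content": "Hello"}],
    "customer_credentials": deployments,
}
print(len(body["customer_credentials"]), "deployments")
```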

Specify available models

You can restrict which models a deployment serves during load balancing. For example, if you only want to use gpt-3.5-turbo in an OpenAI deployment, list it in the available_models field or set it in the platform.

Learn more about how to specify available models in the platform here.
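The effect of available_models can be pictured as a filter applied before a deployment is chosen. The sketch below is a conceptual illustration, not Respan's implementation. The deployment dictionaries, the name key, and the rule that a deployment without available_models can serve any model are all assumptions.

```python
def deployments_for(model, deployments):
    """Return the deployments that are allowed to serve `model`.

    Assumption: a deployment with no available_models list is unrestricted.
    """
    return [
        d for d in deployments
        if "available_models" not in d or model in d["available_models"]
    ]


deployments = [
    {"name": "openai-key-a", "weight": 2, "available_models": ["gpt-3.5-turbo"]},
    {"name": "openai-key-b", "weight": 1},  # unrestricted (assumed behavior)
]

eligible = [d["name"] for d in deployments_for("gpt-4o", deployments)]
print(eligible)
```

In this sketch a request for gpt-4o skips the first deployment, because that deployment is limited to gpt-3.5-turbo.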

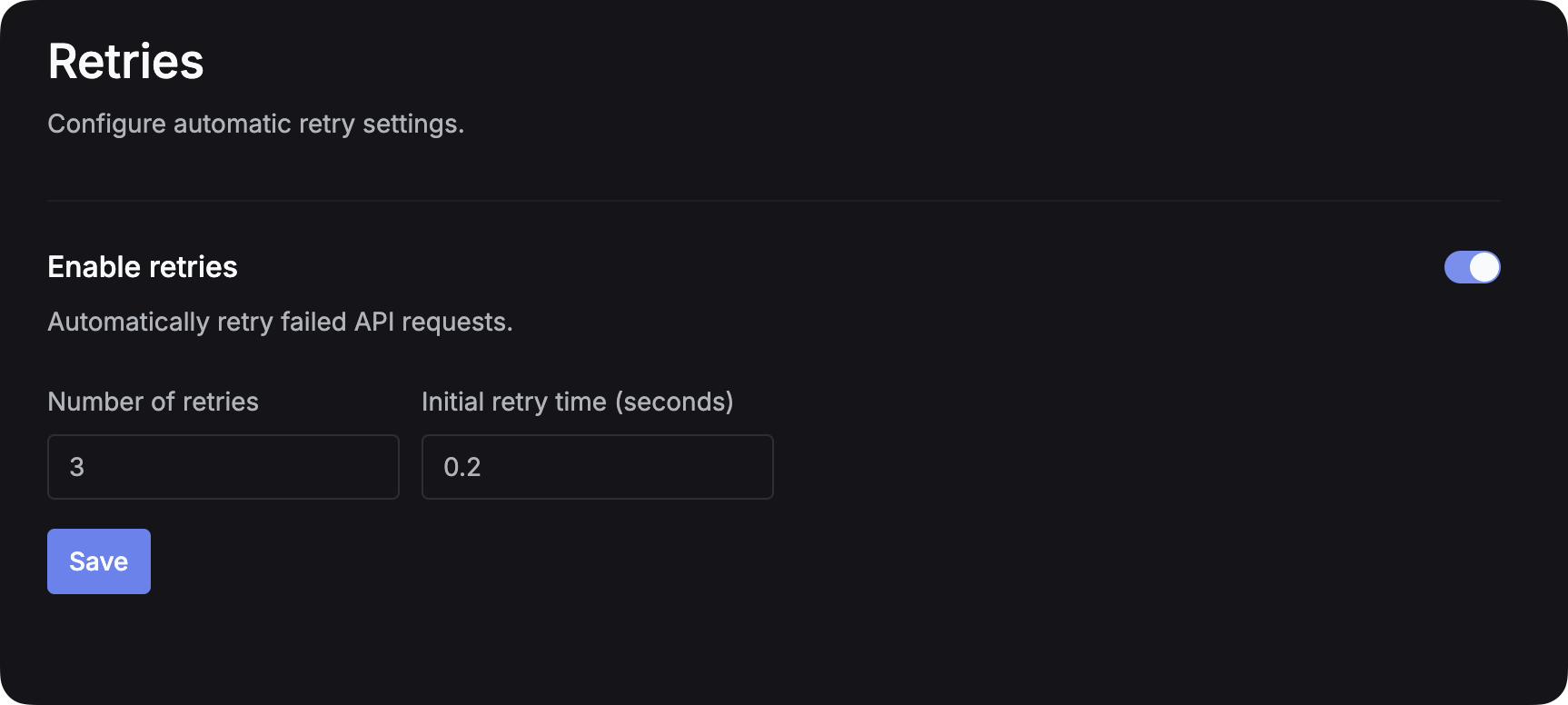

Retries

When an LLM call fails, the system detects the error and retries the request, so that a transient error does not trigger a failover.

Via UI

Go to the Retries page, enable retries, and set the number of retries and the initial retry time.

Supported parameters

Automatic retry logic

Respan automatically retries failed requests when the failure is a rate limit error from the upstream provider.
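The general pattern behind retrying rate-limited requests can be sketched on the client side as follows. This is an illustration of the technique, not Respan's internal logic. RateLimitError, call_with_retries, and the doubling backoff policy are hypothetical; num_retries and initial_delay stand in for the number of retries and the initial retry time.

```python
import time


class RateLimitError(Exception):
    """Stand-in for an upstream rate limit response such as HTTP 429."""


def call_with_retries(send, num_retries=3, initial_delay=1.0, sleep=time.sleep):
    """Call send(); on RateLimitError, wait and retry with a doubling delay."""
    delay = initial_delay
    for attempt in range(num_retries + 1):
        try:
            return send()
        except RateLimitError:
            if attempt == num_retries:
                raise  # retries exhausted, surface the error to the caller
            sleep(delay)
            delay *= 2  # assumed backoff policy


# Demo: the first two calls are rate limited, the third succeeds.
attempts = []


def flaky_send():
    attempts.append(1)
    if len(attempts) < 3:
        raise RateLimitError("429 Too Many Requests")
    return "ok"


waits = []  # record the delays instead of actually sleeping
result = call_with_retries(flaky_send, num_retries=3, initial_delay=0.5, sleep=waits.append)
print(result, waits)
```

Only rate limit errors are retried here; any other exception propagates immediately, which matches the idea of retrying only failures that are likely to clear after a short wait.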

Inline router (models)

Pass an inline list of candidate models per request and let the LLM router pick one. This is the request-time alternative to a pre-configured load_balance_group.

The selected model is recorded on the log under model.
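A minimal sketch of a request that uses the inline router might look like this. The models field and the model key on the log come from this section; the rest of the payload, the helper names, the sample log, and the model names are assumptions for illustration.

```python
def build_routed_request(candidate_models, messages):
    """Build a request body that lets the router choose among candidate models."""
    if not candidate_models:
        raise ValueError("at least one candidate model is required")
    return {"models": list(candidate_models), "messages": messages}


def selected_model(log):
    """Read the model the router picked from a log entry."""
    return log["model"]


body = build_routed_request(
    ["gpt-4o", "gpt-4o-mini"],  # placeholder model names
    [{"role": "user", "content": "Summarize this ticket."}],
)
sample_log = {"model": "gpt-4o-mini"}  # hypothetical log entry
print(body["models"], "->", selected_model(sample_log))
```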

Per-model credential override (credential_override)

Override credentials for a specific model on a single request. This is more granular than customer_credentials, which applies per provider, and is useful when one model in a fallback chain needs different credentials than the rest.

The key is the full model slug, for example azure/gpt-4o or vertex_ai/claude-sonnet-4-5@20250929. Each fallback attempt resolves its credentials per model.
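The per-model lookup can be sketched as below. The credential_override field and the use of full model slugs as keys come from this section; the inner credential fields (api_key, api_base), the environment variable name, the placeholder URL, and the credentials_for helper are assumptions, so check the API reference for the fields each provider expects.

```python
import os


def credentials_for(model, credential_override):
    """Return the override for `model`, or None to use the default credentials."""
    return credential_override.get(model)


credential_override = {
    # Keyed by full model slug; inner fields are assumed for illustration.
    "azure/gpt-4o": {
        "api_key": os.environ.get("AZURE_OPENAI_API_KEY", "placeholder-key"),
        "api_base": "https://example-resource.openai.azure.com",  # placeholder
    }
}

body = {
    "model": "vertex_ai/claude-sonnet-4-5@20250929",
    "fallback_models": ["azure/gpt-4o"],
    "messages": [{"role": "user", "content": "Hello"}],
    "credential_override": credential_override,
}

# Each attempt in the chain resolves its own credentials.
for model in [body["model"], *body["fallback_models"]]:
    source = "override" if credentials_for(model, credential_override) else "default"
    print(model, "->", source)
```

In this example only the Azure fallback uses the override; the primary model keeps whatever credentials are already configured.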