Why we rebuilt the agent

Our v1 agent was a router-of-specialists: a top-level dispatcher that handed each user message to one of several domain-specialist agents (logs, evaluators, automations, etc.). It worked, but it had three structural problems.

1. Context collapses at every handoff. Multi-agent systems sound powerful, but in practice they break context in subtle ways. When sub-agents are wrapped as tools, the router sees only a compressed summary of what the specialist returns, not the full conversation, reasoning, or intermediate decisions. Each handoff is a lossy compression step: the context isn't necessarily gone, but it is flattened, and details like subtle constraints, partial intent, or how a conclusion was reached are no longer visible. The specialist has the mirror-image problem. Operating in isolation, it doesn't know what led to this step, what tradeoffs were considered, or what assumptions were made earlier. The router then has to decide based on shallow summaries, which degrades overall quality. This shows up clearly in UX: a creation request might produce a stream of tool calls followed by "I've created it," with none of the reasoning in between. That missing "inner loop" (how the system interpreted the request and why it took those steps) is critical for trust, and it disappears across agent handoffs.

2. Multi-agent was over-engineering for today's frontier models. The reflex to split work across specialists made sense when models were weaker — narrow each agent's surface, keep it on-rails, make sure each piece does its small job correctly. But for product AI on a current-gen model, the agent is already capable enough to handle the whole task end-to-end. What it actually needs is full context, not isolation; structuring the work around the model's limits is now structuring around a limit that's mostly gone. (We saw this directly during the rewrite — there's a section on the surprising emergent behavior below.)

3. Five prompts, five blast radii. The router carried its own ~10K-char prompt, and each of the four specialists carried a ~50K-char domain prompt. Adding a new tool meant editing the router (so it knew which specialist to route to), the specialist (to teach the new tool), and the routing rules. A typo in any one of those five surfaces shipped a regression.

v2 fixes all three with one architectural change: single agent, single context, SDK-native two-tier tool loading (21 core tools always loaded + ~70 deferred tools fetched on demand via tool_search), tool-namespace grouping for argument accuracy, and task_create / task_update for self-organization on multi-step work. No router. No specialists. No handoff.

The same model and the same generation settings as v1 — just one prompt instead of five, one context instead of five, and a much cleaner reasoning surface.
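To make the two-tier idea concrete, here is a minimal sketch of a toolbox that keeps core tools loaded and moves deferred tools into context only when a search hits them. It is an illustration under assumptions, not the SDK's implementation: the `Tool` and `TwoTierToolbox` classes, the keyword matching, and the `log_list` tool name are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Tool:
    name: str
    description: str
    schema: dict = field(default_factory=dict)


class TwoTierToolbox:
    """Core tools are always visible; deferred tools load on demand."""

    def __init__(self, core: list, deferred: list):
        self._loaded = {t.name: t for t in core}
        self._deferred = {t.name: t for t in deferred}

    def visible_tools(self) -> list:
        """Names of tools whose schemas currently occupy the model's context."""
        return sorted(self._loaded)

    def tool_search(self, query: str) -> list:
        """Naive keyword match over deferred tools; every hit gets loaded."""
        q = query.lower()
        hits = [
            t for t in self._deferred.values()
            if q in t.name.lower() or q in t.description.lower()
        ]
        for t in hits:
            # Move the tool from the deferred tier into the loaded tier.
            self._loaded[t.name] = self._deferred.pop(t.name)
        return sorted(t.name for t in hits)


# Hypothetical example: one core tool, one long-tail tool.
box = TwoTierToolbox(
    core=[Tool("log_list", "List request logs")],
    deferred=[Tool("notification_method_list", "List Slack and email targets")],
)
before = box.visible_tools()
found = box.tool_search("slack")
after = box.visible_tools()
```

The resting context holds only `before`; the deferred schema appears in `after` once the agent has asked for it.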

How we measured "is v2 actually better?"

We built a regression net using our own product. Every run of the test slate dogfoods the exact same flow a customer uses: dataset → evaluator pipeline → experiment → score.

Three evaluators, all scoring on a 1–100 scale, with claude-haiku-4-5 as the default judge:

- Helpfulness & Completeness — does the final assistant message actually answer the user's question? Did the agent take the action when asked, or stop at "Want me to proceed?" Caps at 40 if the agent stalled at confirmation when the user clearly asked for an action.

- Tool-Use Efficiency — count function_call items, classify question complexity (pure-knowledge / simple / medium / complex), penalize redundancy (duplicate creates, scratchpad spam, repeat list calls without filter changes). Rewards direct paths.

- Hallucination & Grounding — extract every concrete claim from the final message (numbers, IDs, named resources), then check whether each one is traceable to an earlier function_call_output. Heavy penalty for invented UUIDs or numbers that don't appear in any tool result.
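The grounding check can be approximated deterministically. The sketch below is a simplified stand-in for the LLM judge, not the evaluator itself: it assumes tool outputs are available as plain strings, and it only covers UUIDs and numerals (named resources are left out). All function names are hypothetical.

```python
import re

UUID_RE = re.compile(
    r"\b[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\b",
    re.IGNORECASE,
)
NUMBER_RE = re.compile(r"\b\d+(?:\.\d+)?\b")


def extract_claims(text: str) -> set:
    """Collect UUIDs and standalone numbers mentioned in a piece of text."""
    uuids = {m.lower() for m in UUID_RE.findall(text)}
    # Blank out UUIDs first so their digit groups are not counted as numbers.
    without_uuids = UUID_RE.sub(" ", text)
    numbers = set(NUMBER_RE.findall(without_uuids))
    return uuids | numbers


def ungrounded_claims(final_message: str, tool_outputs: list) -> set:
    """Claims in the final message that appear in no tool output."""
    evidence = set()
    for output in tool_outputs:
        evidence |= extract_claims(output)
    return extract_claims(final_message) - evidence


message = (
    "Created pipeline 123e4567-e89b-12d3-a456-426614174000 "
    "with 5 failing logs and a score of 72."
)
outputs = ['{"id": "123e4567-e89b-12d3-a456-426614174000", "failed": 5}']
missing = ungrounded_claims(message, outputs)
```

Here `missing` contains only the score of 72, which no tool result supports. Comparing extracted tokens rather than raw substrings avoids treating "5" as grounded just because "15" appears somewhere in the evidence.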

A note on the 1–100 scale: it's strictness-anchored, not a quality percentage. The rubric only awards 80+ when every must-have from the ground truth is hit, and caps at 40 when the agent stops at "Want me to proceed?" instead of acting. Most decent agent runs land in 40–70 — that's the band where the answer is useful but missing one specific. What matters in this kind of comparison isn't the absolute number; it's whether the same rubric, applied to two agents on the same questions, ranks them consistently and shows direction. Spoiler: it does, and the same direction holds when we re-score with a stricter judge as a sanity check.
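One way to read the two caps is as clamps on whatever raw score the judge proposes. This is a sketch under assumptions the rubric description does not confirm: that the caps run as a post-processing step, and that "80+ only when every must-have is hit" means a ceiling of 79 otherwise. The function and parameter names are hypothetical.

```python
def apply_rubric_caps(
    raw_score: int,
    stalled_at_confirmation: bool,
    must_haves_hit: int,
    must_haves_total: int,
) -> int:
    """Clamp a judge's raw score to the strictness anchors of the rubric."""
    score = max(1, min(100, raw_score))  # keep the score on the 1-100 scale
    if must_haves_hit < must_haves_total:
        score = min(score, 79)  # assumed ceiling: 80+ requires every must-have
    if stalled_at_confirmation:
        score = min(score, 40)  # stopping at "Want me to proceed?" caps at 40
    return score
```

For example, a raw 92 stays 92 when all must-haves are hit, drops to 79 when one is missed, and drops to 40 when the agent stalled at confirmation.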

The internal regression net

The first layer of the slate is a fixed set of internal probes — same questions every time we change the agent prompt. They're written to cover the core tools and functionalities the agent has to get right (taxonomy of evaluators / graders / pipelines, ID asymmetries, multi-step create/update flows, automation and monitor composition) — the surfaces customers depend on most. Locking those into a regression net is how we keep the agent sustainable over many prompt iterations: a future prompt edit can't silently break the things the platform is built around, because the slate would catch it before we ship.

The probes span four categories, each targeting a different class of bug:

Taxonomy (I1–I6)

Does the agent keep evaluator vs grader vs pipeline straight? Does it know that passing workflow_id (family ID) to experiment_create returns 404, and id (version PK) is the right one?

Example:

"I tried evaluator_workflow_ids: [<two valid-looking UUIDs>] and got WorkflowVersions not found. What did I do wrong?"

The expected answer: identify that the user passed family IDs instead of version PKs, verify by listing pipelines, and return the correct version IDs.
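The diagnosis in that expected answer amounts to a lookup against the pipeline list. A minimal sketch, assuming each listed pipeline is a dict with `id` (version PK) and `workflow_id` (family ID) and that the list holds one current version per family; the record shape and the function name are hypothetical.

```python
def diagnose_ids(submitted_ids: list, pipelines: list) -> dict:
    """Classify each submitted ID and suggest the version PK where possible."""
    version_ids = {p["id"] for p in pipelines}
    family_to_version = {p["workflow_id"]: p["id"] for p in pipelines}

    report = {}
    for sid in submitted_ids:
        if sid in version_ids:
            report[sid] = ("ok", sid)
        elif sid in family_to_version:
            # The user passed a family ID; point at the matching version PK.
            report[sid] = ("family_id", family_to_version[sid])
        else:
            report[sid] = ("unknown", None)
    return report


pipelines = [
    {"id": "v-1", "workflow_id": "fam-1"},
    {"id": "v-2", "workflow_id": "fam-2"},
]
report = diagnose_ids(["fam-1", "v-2", "zzz"], pipelines)
```

An "unknown" result is the case where the agent should ask rather than guess.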

Actions (A1–A6)

Can the agent execute multi-step create/update flows end-to-end?

Example:

"Create a 'Conciseness 1-100' evaluator … make sure it shows up on the Evaluators page when you're done."

The expected flow is evaluator_create → evaluator_run → evaluator_commit → evaluation_pipeline_create. Skipping the last step is the most common failure mode: you create a grader but never wrap it in a pipeline, so nothing shows up in the UI.
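A probe like this can be checked mechanically by testing whether the expected steps appear, in order, among the tool calls in a trace. The sketch below assumes the trace has already been reduced to a list of tool names; `first_missing_step` is a hypothetical helper, not part of the product.

```python
EXPECTED_FLOW = [
    "evaluator_create",
    "evaluator_run",
    "evaluator_commit",
    "evaluation_pipeline_create",
]


def first_missing_step(called: list, expected: list = EXPECTED_FLOW):
    """Return the first expected step not found in order, or None if all ran."""
    remaining = iter(called)
    for step in expected:
        # any() consumes the iterator up to the match, which enforces ordering.
        if not any(name == step for name in remaining):
            return step
    return None


trace = ["docs_search", "evaluator_create", "evaluator_run", "evaluator_commit"]
missing_step = first_missing_step(trace)
```

Unrelated calls such as docs_search between steps are tolerated; only the order of the expected steps matters.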

Cross-domain (CD1–CD3)

Does the same ID-discipline transfer across domains?

Example:

"Set up an automation that runs my Hallucination evaluator on every failed request log and Slack-alerts me if the score drops below 70."

The pipeline ID lives inside an automation task config, the notification_method_id is a foreign key, and the agent has to find an existing Slack target instead of inventing one.

Automation / Monitor (M1–M6)

Can the agent compose multi-task workflow chains, handle dotpaths fluently, and say so honestly when the platform can't do what was asked?

Example:

"Create an automation that runs my evaluator every Monday at 9am ET."

The honest answer is "Respan workflows trigger on events, not on a cron schedule — the closest options are X or Y." A grounding-violating agent silently invents a "scheduled" trigger type.

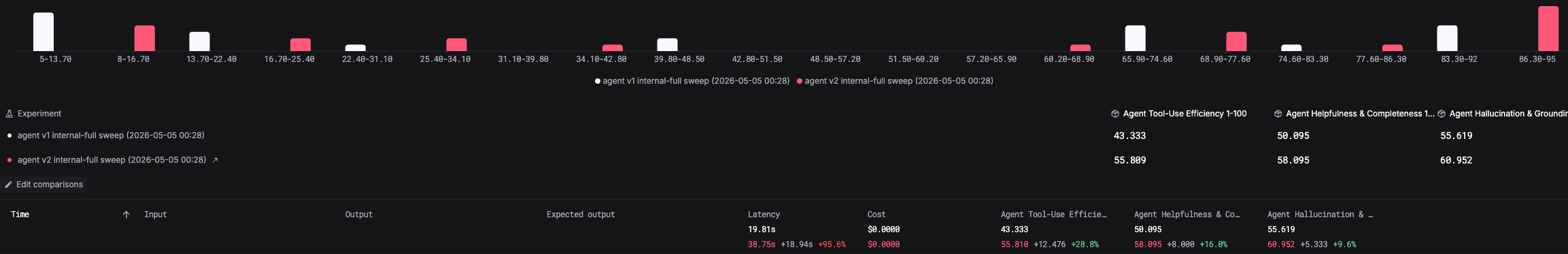

The data: v2 wins on every dimension

The Respan experiment-comparison view for the internal slate, scored by the default judge (claude-haiku-4-5):

| Dimension | v1 | v2 | Δ |
|---|---|---|---|
| Tool-Use Efficiency | 43.3 | 55.8 | +12.5 (+28.8%) |
| Helpfulness & Completeness | 50.1 | 58.1 | +8.0 (+16.0%) |
| Hallucination & Grounding | 55.6 | 61.0 | +5.3 (+9.6%) |
| Latency (per turn) | 19.8s | 38.8s | +18.9s |

Latency is the one row that moves the other way, and the cause is the same behavior change: v2 is slower per turn because it actually completes the action end-to-end, while most of v1's "fast" responses stopped at "Want me to proceed?"

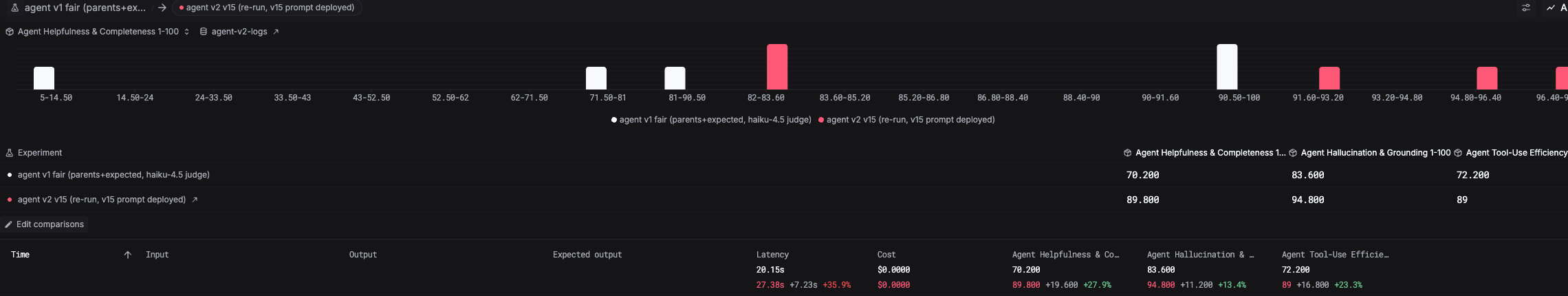

Real customer questions (out-of-distribution check)

The internal regression net guarantees we don't regress on known failure modes — but it doesn't tell us whether the improvements generalize to questions we haven't pre-anticipated. That's what the second layer is for: a smaller set of real customer questions paraphrased from our support inbox, deliberately kept out of the regression net so they stay novel.

Examples:

- "What's my current monthly request volume and how is it trending?"

- "I want to set up an automation: every time a request fails, I want it added to my Failed Requests dataset."

- "My error rate jumped this morning — find me the failing logs and tell me what's going wrong."

These cross multiple domains, embed soft constraints ("compare this week to last week"), and ask for actions to actually be performed — exactly the shapes the internal slate is meant to be a proxy for.

The data: improvements transfer

On these out-of-distribution questions, v2 follows the same pattern as the internal slate: it actually finishes the multi-step actions (creates the automation, runs the experiment, returns concrete numbers and IDs traceable to tool output). The Helpfulness & Completeness gain on this set is the most visible — because the consequence of stopping at confirmation on a real customer question is "the customer didn't get what they asked for." The internal slate's improvements transfer to questions the slate has never seen.

That's the case for shipping v2. The regression net is intact, the cross-judge ranking holds, and the gains generalize to real customer language.

What changed inside v2

- One prompt instead of five. The router-and-specialist split was a coordination tax we no longer pay. The whole agent is one prompt — same model, same settings, way less total prompt mass.

- SDK-native two-tier tool loading. v2 loads 21 core tools at startup (the most-used surface: log_*, dataset_*, experiment_*, etc.) and defers ~70 long-tail tools behind tool_search. The agent fetches a deferred tool's schema only when it needs it. Same full coverage as v1, much smaller resting context.

- Tool-namespace grouping for argument accuracy. Tools are grouped by domain (evaluators, workflows, datasets, prompts) and the agent picks the namespace before the tool — which gave us measurable gains on argument shape correctness, especially on the ID-asymmetry traps (passing id vs workflow_id to the right field).

- Task tools for self-organization. task_create / task_update give the agent a place to plan multi-step work visibly instead of carrying everything in reasoning tokens. (More on the user-facing version of this below.)

- Hard rules on ID asymmetry. "Version PK is what experiment_create wants, not the family ID" lives in the prompt now, with a pointer to the diagnose-not-retry pattern when an error mentions WorkflowVersions not found.

- List-no-match means ask, not invent. When notification_method_list returns nothing matching the user's described Slack channel, v2 asks the user instead of silently creating one.

- Async-aware. Long-running operations (experiments, dataset imports) get launched and reported as "running async" instead of polled in the same turn — avoiding a class of "the agent says it's done but it's actually still running" bugs.
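The "list-no-match means ask" rule from the list above can be sketched as a small resolver. This is an illustration, not the agent's actual logic: in v2 the rule lives in the prompt, and the record fields (`id`, `type`, `name`) and the `Resolution` type here are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Resolution:
    action: str  # "use" or "ask_user"
    method_id: Optional[str] = None
    question: Optional[str] = None


def _normalize(channel: str) -> str:
    return channel.strip().lstrip("#").lower()


def resolve_slack_target(described_channel: str, methods: list) -> Resolution:
    """Pick an existing Slack target, or ask the user; never create one."""
    wanted = _normalize(described_channel)
    matches = [
        m for m in methods
        if m.get("type") == "slack" and _normalize(m.get("name", "")) == wanted
    ]
    if len(matches) == 1:
        return Resolution("use", method_id=matches[0]["id"])
    if not matches:
        question = (
            f"I couldn't find a Slack target named '{described_channel}'. "
            "Which existing target should I use?"
        )
    else:
        question = (
            f"{len(matches)} Slack targets match '{described_channel}'. "
            "Which one should I use?"
        )
    return Resolution("ask_user", question=question)


methods = [{"id": "nm_1", "type": "slack", "name": "#alerts"}]
hit = resolve_slack_target("alerts", methods)
miss = resolve_slack_target("#oncall", methods)
```

The resolver has no branch that invents an ID: the only outcomes are an existing `method_id` or a question for the user.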

The numbers say the bet paid off. The slate is also now a regression net we run against every prompt iteration — so we'll know the moment a future change starts costing us on any of these dimensions.

The unplanned magic: the loop reads the docs on its own

The first thing we noticed — back when v2's prompt was still a rough draft we hadn't finished editing — was that the agent did something we never told it to do.

When v2 hit a request it didn't fully understand, or got an unexpected error from a tool, it would call docs_search on its own, multiple times, to verify the current schema, double-check which ID type a field expected, and figure out why a tool had returned an error. None of this was prompted — the rough draft didn't say "look up the docs when you're unsure." The single-agent loop just gave the model the room to do it.

This is the part of the architecture rewrite we hadn't priced in. v1's router-and-specialist setup didn't have this option: when a specialist hit an unfamiliar error, it returned a string to the router, which had to act on the summary without re-entering the loop. v2's loop can take an error message, search the docs, retry with the right shape, and only then turn back to the user — all in one pass, without losing context. Diagnose-then-retry stopped being a behavior we had to engineer and became a behavior the architecture allowed.

The lesson: a clean loop with full context isn't just "a tidier engineering surface." It changes what the model is capable of doing, because every recovery path the model wants to take is now available without losing state.
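The diagnose-then-retry path described above has roughly the following shape. In v2 the model makes these decisions inside its loop; the sketch only shows the control flow. `ToolError`, `call_with_diagnosis`, and the fake tool, docs search, and argument fixer are all hypothetical and do not mirror the real tool signatures.

```python
class ToolError(Exception):
    """Hypothetical error type for a failed tool call."""


def call_with_diagnosis(tool, args, docs_search, revise_args, max_attempts=3):
    """Call a tool; on failure, consult the docs and retry with revised args."""
    for attempt in range(1, max_attempts + 1):
        try:
            return tool(**args)
        except ToolError as err:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the user
            notes = docs_search(str(err))         # diagnose
            args = revise_args(args, err, notes)  # then retry with a new shape


# Fakes for illustration only.
def fake_experiment_create(**kwargs):
    if "workflow_id" in kwargs:
        raise ToolError("WorkflowVersions not found")
    return {"status": "created", "version_id": kwargs["id"]}


def fake_docs_search(query):
    return "Pass the version PK as `id`, not the family `workflow_id`."


def fake_revise_args(args, err, notes):
    # In the real agent the model does this by reading the docs result.
    fixed = {k: v for k, v in args.items() if k != "workflow_id"}
    fixed["id"] = "version-pk-123"
    return fixed


result = call_with_diagnosis(
    fake_experiment_create,
    {"workflow_id": "family-abc"},
    fake_docs_search,
    fake_revise_args,
)
```

The first call fails, the docs lookup informs the fix, and the second call succeeds, all inside one function scope. That shared scope is the code analogue of keeping everything in a single context.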

What's next

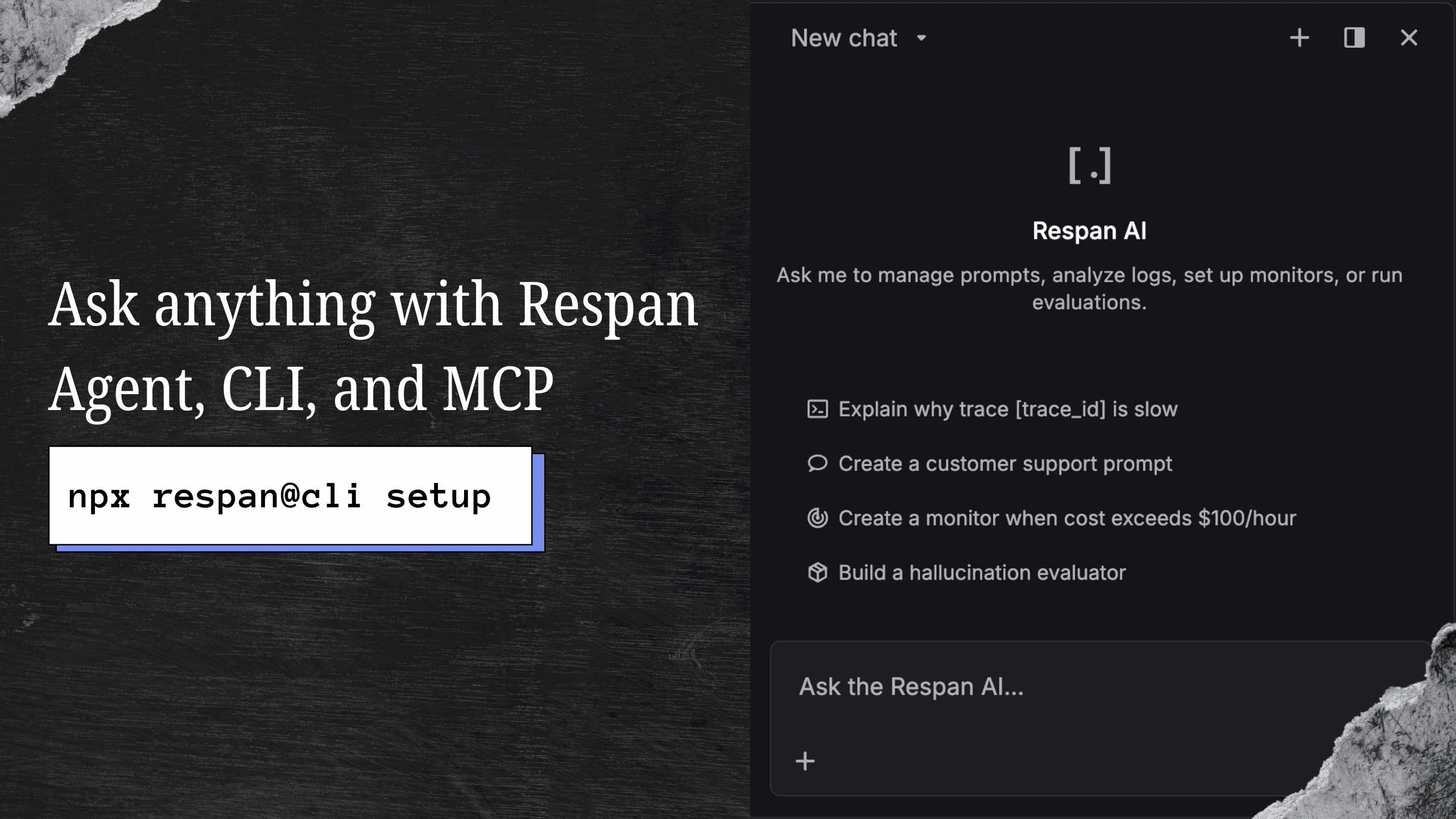

The biggest gap right now is making the agent's process visible to the user. v2's loop is doing more interesting work than the chat UI currently shows — and the user only sees the wall of text at the end. Three improvements coming next, all in the same direction:

- A live plan checklist. v2 already uses task_create / task_update internally to structure multi-step work, but those calls aren't surfaced in the chat UI yet. The next iteration promotes them to first-class deferred tools that render as a live checklist in the conversation: the agent commits to a plan up-front, ticks off steps as it executes them, and the user sees exactly what's happening in real time.

- Inline tool outputs. Right now a tool call shows up as a one-line "called X" pill. We're going to surface a structured, expandable view of each tool's actual return value — the dataset row that came back, the count of failed logs, the exact UUID the agent looked up — so the user can see the same evidence the agent is reasoning over instead of trusting a final summary.

- Visible reasoning blocks. v2's loop emits reasoning items between tool calls (the agent's internal thinking about what to do next). We collapse those by default today; the next version makes them a togglable detail under each step so a user who wants to audit the agent's logic — "why did you decide to use the duplicate workflow?" — can read the actual chain instead of guessing.

Combined with better token-level streaming for prose and tool-level streaming for actions, the agent will feel less like a black box that returns a wall of text and more like a teammate working through a transparent checklist.

That same surface is also a regression-test goldmine — once plans, tool outputs, and reasoning are all rendered first-class, every "the agent did the wrong thing" report is reproducible from the saved trace, and we can replay the failure deterministically against any future prompt.

Going deeper on log-investigation questions

The other gap is depth on investigative questions. When a user asks "why did my error rate spike this morning?" or "which requests are slow today?", v2 today answers with the right counts and the right time bucket — and stops there. That's a triage starting point, not an answer.

The next iteration teaches the agent to drill when the question is investigative: open the failing logs, read the actual error messages, walk the trace tree, group by error class, and surface concrete root causes ("three of the five failures were rate-limit errors from Anthropic on claude-sonnet-4-6; the other two were malformed prompts missing the messages field") instead of stopping at "5 logs failed." High-level analytics are how you decide whether to investigate; once a user has decided, they want the actual investigation — and the agent should know the difference.
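The "group by error class" step is the simplest part of that drill-down to sketch. The example assumes each log is a dict with `status` and `error_class` fields, which are hypothetical names; the real log schema may differ.

```python
from collections import Counter


def summarize_failures(logs: list):
    """Count failed logs per error class, most frequent first."""
    counts = Counter(
        log.get("error_class", "unknown")
        for log in logs
        if log.get("status") == "failed"
    )
    total = sum(counts.values())
    return total, counts.most_common()


logs = [
    {"status": "failed", "error_class": "rate_limit"},
    {"status": "failed", "error_class": "rate_limit"},
    {"status": "failed", "error_class": "malformed_prompt"},
    {"status": "success"},
    {"status": "failed", "error_class": "rate_limit"},
    {"status": "failed", "error_class": "malformed_prompt"},
]
total, by_class = summarize_failures(logs)
```

The result moves the answer from "5 logs failed" to "3 rate-limit errors and 2 malformed prompts", which is the level of detail an investigative question calls for.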