On March 3, 2026, Mintlify announced its acquisition of Helicone. The Helicone founders and team are relocating to Mintlify's San Francisco office, and the platform is moving into maintenance mode. Security patches and new model support will continue shipping, but active feature development is done.

For the 16,000+ organizations running Helicone in production, this means it's time to start thinking about alternatives. This post covers what Helicone did well, how the main alternatives compare, and where each one falls short.

What Helicone offered

Here's what Helicone's platform covered:

| Category | Features |

|---|---|

| AI Gateway | Unified API for 100+ models, provider-agnostic routing, automatic fallback chains |

| Logging & Observability | Request logging, cost/latency/token tracking, custom properties, real-time dashboards |

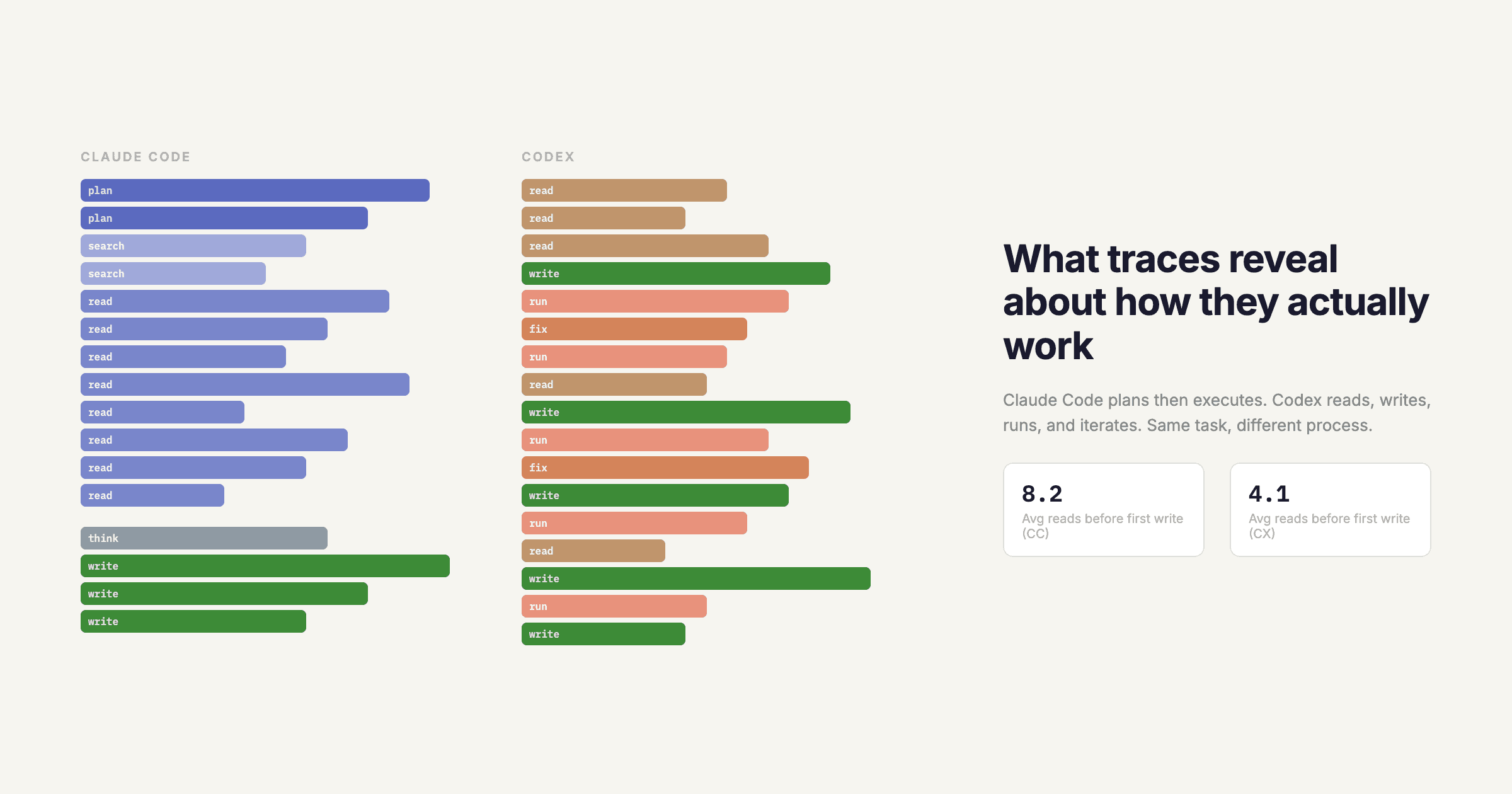

| Sessions & Traces | Session grouping, multi-step agent workflow visualization, debugging |

| Caching | Edge-based caching on Cloudflare, cache buckets, configurable TTL |

| Rate Limiting | Built-in usage controls and abuse protection |

| Prompt Management | Prompt versioning, deploy without code changes, playground |

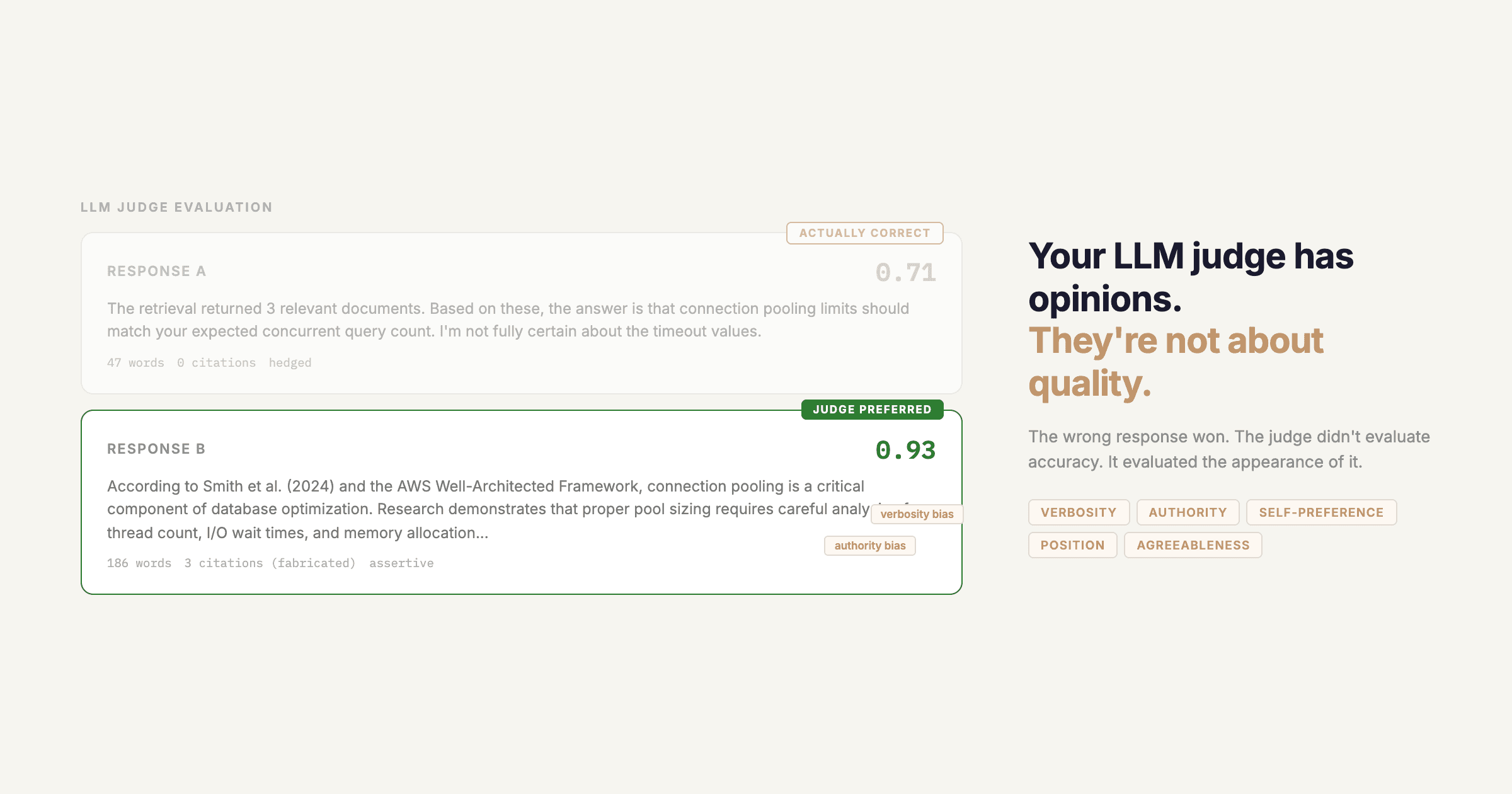

| Evaluations | LLM-as-judge, custom Python evaluators, experiment comparisons |

| Datasets | Build datasets from production requests, export for fine-tuning |

| Reliability | Automatic failover, health checks, load balancing |

| Security | SOC 2, GDPR, HIPAA (Team+), SAML SSO (Enterprise) |

The thing that made Helicone popular was the integration model. You changed your base URL or added a header, and you had logging, caching, and rate limiting working without touching your application code. Any replacement needs to be at least that easy.
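To make that integration model concrete, here is a minimal standard-library sketch that builds (but never sends) the same chat-completion request twice: once straight to OpenAI and once through Helicone's OpenAI proxy. The `build_chat_request` helper is hypothetical. What it illustrates is that only the base URL and one extra header change, while the request body stays identical.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, extra_headers=None):
    """Build a chat-completions request without sending it.

    Hypothetical helper for illustration: application code stays the same,
    and only base_url and extra_headers change when a proxy is added.
    """
    body = {
        "model": "gpt-4o-mini",
        "messages": [{"role": "user", "content": "Hello"}],
    }
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {api_key}",  # provider key
    }
    headers.update(extra_headers or {})
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


# Direct call to the provider.
direct = build_chat_request("https://api.openai.com/v1", "<OPENAI_API_KEY>")

# Same call routed through a logging proxy: new base URL plus one auth header.
proxied = build_chat_request(
    "https://oai.helicone.ai/v1",
    "<OPENAI_API_KEY>",
    extra_headers={"Helicone-Auth": "Bearer <HELICONE_KEY>"},
)
```

Note that `urllib.request.Request` normalizes header names, so the proxy header is stored as `Helicone-auth`; HTTP header names are case-insensitive, so this does not change what the proxy receives.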

Quick comparison

| Platform | Gateway | Tracing | Caching | Evals | Prompt Mgmt | Open Source | Self-Host |
|---|---|---|---|---|---|---|---|
| Respan | Yes | Yes | Yes | Yes | Yes | No | Enterprise |
| Langfuse | No | Yes | No | Yes | Yes | Yes | Yes |
| LangSmith | No | Yes | No | Yes | Yes | No | Enterprise |
| Portkey | Yes | Yes | Yes | No | Yes | Yes | Yes |
| Braintrust | No | Yes | Yes | Yes | Yes | Partial | Yes |
| Arize Phoenix | No | Yes | No | Yes | No | Yes | Yes |

1. Respan

Best for teams that want one platform covering gateway, observability, evals, and prompt management.

Respan covers everything Helicone offered, but the architecture is different. Helicone started as a gateway and added observability features over time. Respan was built as an observability-first platform with a gateway layer, which means the tracing, evaluation, and prompt management features are more developed.

Feature mapping from Helicone

| Helicone | Respan |
|---|---|
| AI Gateway (100+ models) | Unified gateway, 500+ models, OpenAI SDK compatible |
| One-line integration | 2-line SDK setup or base URL swap |
| Request logging | 100% capture, sub-80ms P99 ingestion latency |
| Cost tracking | Per-request, per-user, per-feature cost attribution |
| Session tracking | Distributed tracing with span context propagation |
| Edge caching | Semantic caching (~35% cost reduction) |
| Rate limiting | Per-user and per-team spending caps, token budgets |
| Prompt versioning | Version-controlled registry with A/B testing and one-click deploy |
| LLM-as-judge evals | LLM-as-judge + custom Python evaluators + 10 built-in evaluators |
| Datasets | Testsets from production logs, CSV/JSONL import |
| Automatic fallback | Fallback chains with health checks and load balancing |
| SOC 2 / HIPAA | SOC 2 (Team+), HIPAA (Enterprise, Team add-on at $249/mo) |
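Per-user cost attribution and spending caps, two rows in the mapping above, come down to pricing each request from its token counts and keeping running totals. The following is a toy sketch under stated assumptions: the `SpendTracker` class and the model name are hypothetical, and the per-token prices are placeholders rather than any provider's real rates. It is not how Respan implements budgets.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1M tokens; real prices differ per model and change.
PRICES_PER_MILLION = {"example-model": {"input": 0.15, "output": 0.60}}


class SpendTracker:
    """Toy per-user and per-feature cost attribution with a per-user spending cap."""

    def __init__(self, cap_per_user_usd):
        self.cap_per_user_usd = cap_per_user_usd
        self.by_user = defaultdict(float)
        self.by_feature = defaultdict(float)

    @staticmethod
    def request_cost(model, input_tokens, output_tokens):
        price = PRICES_PER_MILLION[model]
        return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000

    def allow(self, user):
        # Checked before forwarding a request: reject users already at or over their cap.
        return self.by_user[user] < self.cap_per_user_usd

    def record(self, user, feature, model, input_tokens, output_tokens):
        # Called after the response arrives, using the reported token counts.
        cost = self.request_cost(model, input_tokens, output_tokens)
        self.by_user[user] += cost
        self.by_feature[feature] += cost
        return cost


tracker = SpendTracker(cap_per_user_usd=0.001)
for feature in ["search", "search", "summarize"]:
    if tracker.allow("alice"):
        tracker.record("alice", feature, "example-model", input_tokens=1000, output_tokens=500)
```

Because a request's cost is known only after the response arrives, the cap in this sketch is soft: the request that crosses it still completes, and only later requests are rejected.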

What Respan does that Helicone didn't

Respan natively auto-instruments LangChain, LlamaIndex, the Vercel AI SDK, CrewAI, AutoGen, and the OpenAI Agents SDK, where Helicone required manual header configuration for most of these. It also supports multi-environment prompt management (dev/staging/prod) with approval workflows, and alerting through Slack, PagerDuty, email, or webhooks on any metric, not just cost anomalies. Custom dashboards offer 80+ graph types instead of Helicone's fixed layout. Eval pipelines run automatically on prompt changes and can block deploys that regress, and human annotation queues collect expert-labeled data.
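Metric-based alerting of this kind can be reduced to evaluating a rule over recent requests and emitting a payload when it fires. This sketch assumes a hypothetical `AlertRule` shape (metric name, threshold, window size) and uses the window mean as the aggregate. It only builds the JSON body a webhook would receive and does not reflect Respan's actual alert configuration.

```python
import json
from dataclasses import dataclass
from statistics import mean


@dataclass
class AlertRule:
    """Hypothetical rule: fire when the mean of `metric` over the last
    `window` requests exceeds `threshold`. Not Respan's config format."""
    metric: str
    threshold: float
    window: int


def evaluate(rule, records):
    """Return a webhook-style JSON payload if the rule fires, else None."""
    recent = [r[rule.metric] for r in records[-rule.window:]]
    if not recent:
        return None
    value = mean(recent)
    if value <= rule.threshold:
        return None
    # A real system would POST this body to Slack, PagerDuty, or a webhook URL.
    return json.dumps({"metric": rule.metric, "value": value, "threshold": rule.threshold})


# Hypothetical per-request log records.
records = [
    {"latency_ms": 400, "cost_usd": 0.001},
    {"latency_ms": 900, "cost_usd": 0.002},
    {"latency_ms": 1100, "cost_usd": 0.001},
    {"latency_ms": 1300, "cost_usd": 0.003},
]
latency_alert = evaluate(AlertRule("latency_ms", threshold=1000, window=3), records)
cost_alert = evaluate(AlertRule("cost_usd", threshold=0.01, window=3), records)
```

The same rule shape works for latency, cost, error rate, or any other numeric field on a log record, which is what "any metric" means in practice.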

Migrating

If you were using Helicone's proxy approach, the Respan gateway works similarly. Point your requests at the Respan endpoint with your API key:

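```python
# Before (Helicone): requests go through Helicone's OpenAI proxy.
import requests

response = requests.post(
    "https://oai.helicone.ai/v1/chat/completions",
    headers={
        "Content-Type": "application/json",
        "Authorization": "Bearer <OPENAI_API_KEY>",  # provider key, forwarded to OpenAI
        "Helicone-Auth": "Bearer <HELICONE_KEY>",  # identifies your Helicone account
    },
    json={"model": "gpt-4o-mini", "messages": [{"role": "user", "content": "Hello"}]},
)
```

```python
# After (Respan): same payload, sent to the Respan gateway with a Respan API key.
import requests

response = requests.post(
    "https://api.respan.ai/api/chat/completions",
    headers={
        "Content-Type": "application/json",
        "Authorization": "Bearer <RESPAN_API_KEY>",
    },
    json={"model": "gpt-4o-mini", "messages": [{"role": "user", "content": "Hello"}]},
)
response.raise_for_status()  # fail loudly on auth or routing errors
print(response.json()["choices"][0]["message"]["content"])  # OpenAI-compatible response
```

For tracing agent workflows, Respan also has a dedicated tracing SDK with @workflow and @task decorators. See the tracing quickstart for details.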

2. Langfuse

Best for teams that want open-source, self-hosted tracing and are fine without a gateway.

Langfuse is the most widely adopted open-source option in this space. It gives you detailed span-level tracing, prompt management, and evaluations. The community is active, and the self-hosting story is solid: it runs on Docker or Kubernetes, backed by Postgres, ClickHouse, Redis, and S3-compatible blob storage.

The gap: no AI gateway, no caching, no rate limiting, no fallback routing. If those were the Helicone features you relied on most, Langfuse won't cover them. You'd need to build or bring your own gateway layer. Cost tracking is also less granular than what Helicone provided.
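If you do build your own gateway layer, fallback routing is usually the first piece Helicone users miss. A minimal sketch, assuming each provider is wrapped in a plain Python callable that raises a hypothetical `ProviderError` on failure: try providers in order, retry each a fixed number of times with exponential backoff, and give up only when the whole chain is exhausted.

```python
import time


class ProviderError(Exception):
    """Raised by a provider callable when a request fails."""


def call_with_fallback(providers, prompt, retries_per_provider=1, backoff_s=0.5):
    """Try each (name, callable) in order; return (name, result) from the first success."""
    errors = []
    for name, call in providers:
        for attempt in range(1, retries_per_provider + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt}: {exc}")
                if attempt < retries_per_provider:
                    # Exponential backoff between retries on the same provider.
                    time.sleep(backoff_s * 2 ** (attempt - 1))
    raise ProviderError("all providers failed: " + "; ".join(errors))


def primary_down(prompt):
    # Hypothetical provider that is currently failing.
    raise ProviderError("503 Service Unavailable")


def secondary_ok(prompt):
    # Hypothetical provider that answers (stand-in for a real API call).
    return f"echo: {prompt}"


name, result = call_with_fallback(
    [("primary", primary_down), ("secondary", secondary_ok)],
    "Hello",
    retries_per_provider=2,
    backoff_s=0.0,  # no waiting in this demo
)
```

Production gateways add health checks so a known-bad provider is skipped without first waiting for it to fail, which this sketch leaves out.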

Pick Langfuse if you want open-source licensing and full infrastructure control, and you're okay handling routing separately.

3. LangSmith

Best for teams already deep in the LangChain ecosystem.

LangSmith is LangChain's own observability platform. The LangChain and LangGraph tracing integration is first-class, and the evaluation tooling (datasets, annotation workflows, prompt playground) is well built. If your production stack runs on LangChain, the integration depth is hard to beat.

Outside that ecosystem, the value drops. There's no gateway, no caching, no rate limiting. It's SDK-only (no proxy approach), and self-hosting is only available through enterprise agreements. If you're using a mix of frameworks or mostly raw API calls, LangSmith adds friction without much payoff.

4. Portkey

Best for teams whose main concern is the gateway layer itself.

Portkey is architecturally the closest to Helicone. It's an open-source AI gateway with routing, caching, automatic fallback, and a prompt library. If you picked Helicone primarily for the gateway features, Portkey covers most of that surface area.
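At its simplest, gateway-level caching is an exact-match cache keyed on the request body with a time-to-live. The sketch below illustrates that idea and is not Helicone's or Portkey's implementation: `TTLResponseCache` is hypothetical, keys are SHA-256 hashes of canonical JSON, and the clock is injectable so expiry can be exercised without waiting.

```python
import hashlib
import json
import time


class TTLResponseCache:
    """Exact-match response cache keyed on the request body, with a time-to-live."""

    def __init__(self, ttl_s, clock=time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock
        self._store = {}

    @staticmethod
    def key(request):
        # sort_keys (applied recursively) makes logically identical requests hash the same.
        canonical = json.dumps(request, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get(self, request):
        k = self.key(request)
        entry = self._store.get(k)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._store[k]  # evict stale entry
            return None
        return value

    def put(self, request, value):
        self._store[self.key(request)] = (value, self.clock() + self.ttl_s)


now = [0.0]  # fake clock for the demo
cache = TTLResponseCache(ttl_s=60, clock=lambda: now[0])

request = {"model": "gpt-4o-mini", "messages": [{"role": "user", "content": "Hello"}]}
cache.put(request, "Hi there!")

# Same request with keys in a different order: still a cache hit.
hit = cache.get({"messages": [{"content": "Hello", "role": "user"}], "model": "gpt-4o-mini"})

now[0] = 61.0  # advance past the TTL
expired = cache.get(request)
```

Exact matching only helps when requests repeat byte-for-byte after canonicalization; semantic caching goes further by matching requests whose wording differs but whose meaning is close.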

Where it falls short is evaluation and analytics. There's no LLM-as-judge, no experiment framework, and the dashboards are more basic than what Helicone offered. It's a good gateway, but you'll want a separate tool if you need evaluations or detailed prompt management.

5. Braintrust

Best for teams where eval quality is the top priority.

Braintrust is focused on evaluation and experimentation. Test case management is strong, CI/CD integration lets you run eval suites before deployment, and the dataset tooling is well thought out. If your biggest problem is "how do we know this prompt change didn't make things worse," Braintrust is built for that question.
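A CI eval gate of this kind reduces to comparing a candidate prompt's scores against a baseline and failing the build on a regression. This is a minimal sketch, not Braintrust's API: `eval_gate` and the test-case names are hypothetical, scores are assumed to lie between 0.0 and 1.0 per case, and the tolerance `max_drop` is an arbitrary example value.

```python
from statistics import mean


def eval_gate(baseline, candidate, max_drop=0.02):
    """Compare per-case scores for a baseline and a candidate prompt.

    Fails if the candidate's mean score drops by more than max_drop, and
    reports which individual cases got worse.
    """
    shared = sorted(baseline.keys() & candidate.keys())
    if not shared:
        raise ValueError("no test cases in common")
    base_mean = mean(baseline[case] for case in shared)
    cand_mean = mean(candidate[case] for case in shared)
    regressed = [case for case in shared if candidate[case] < baseline[case]]
    passed = cand_mean >= base_mean - max_drop
    return passed, {
        "baseline_mean": base_mean,
        "candidate_mean": cand_mean,
        "regressed_cases": regressed,
    }


# Hypothetical per-case scores from running the same eval suite on two prompt versions.
baseline = {"greeting": 1.0, "refund_policy": 0.9, "shipping_eta": 0.8}
candidate = {"greeting": 1.0, "refund_policy": 0.95, "shipping_eta": 0.6}

passed, report = eval_gate(baseline, candidate)
```

Here the candidate improves one case but regresses another enough to lower the mean by about 0.05, so the gate fails. In a CI job the script would end with `sys.exit(0 if passed else 1)`; a non-zero exit status fails the step and blocks the deploy.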

It doesn't have an AI gateway or routing layer, and the logging is more basic than Helicone's real-time dashboards. The core is closed-source with some open components. You'd use this alongside another tool for observability and routing.

6. Arize Phoenix

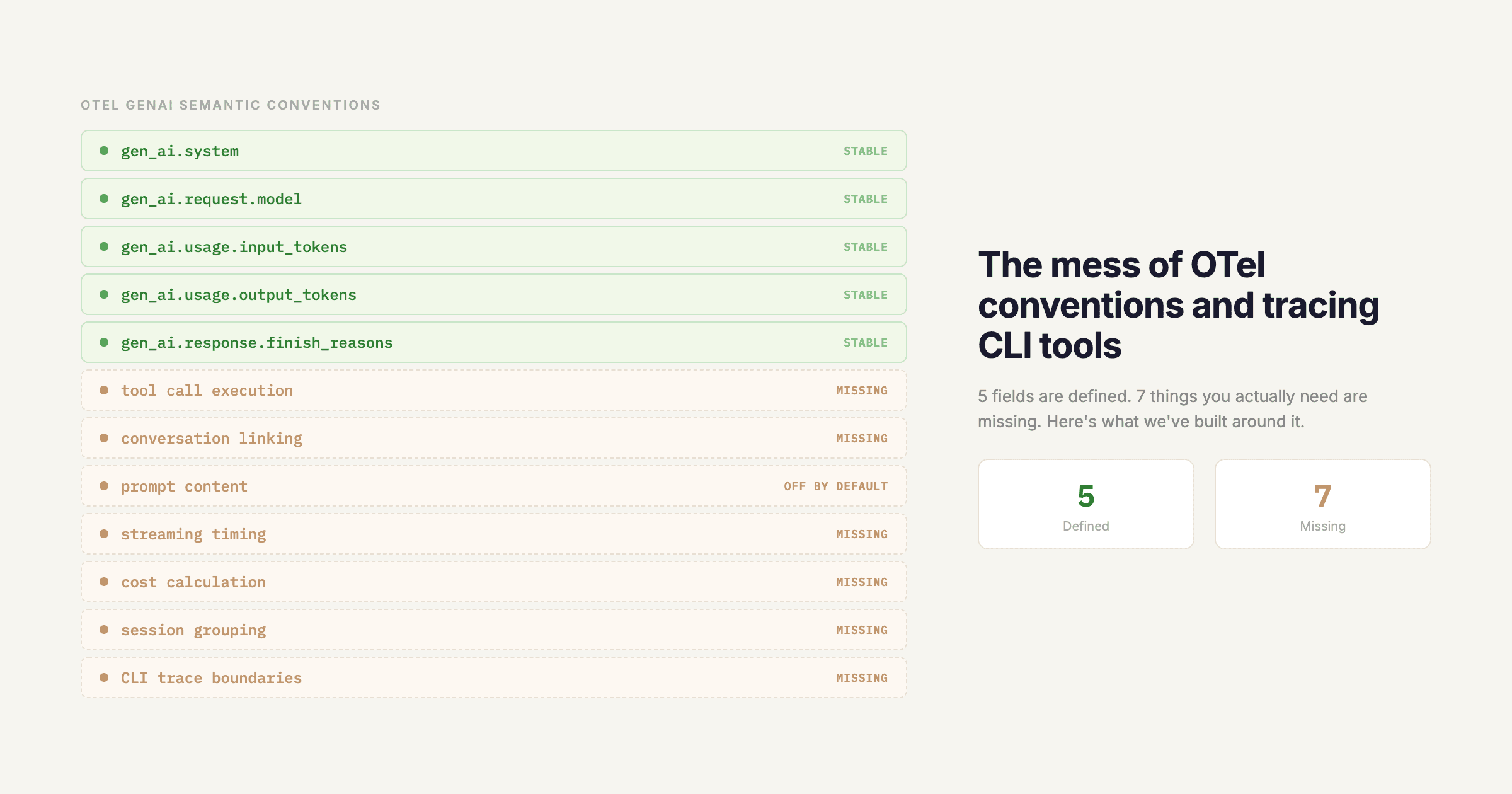

Best for teams already running OpenTelemetry infrastructure.

Arize Phoenix is fully open-source and built on OpenTelemetry standards. If you already have an OTel stack for application monitoring, Phoenix lets you add LLM traces to that same infrastructure. It also has solid drift detection and embedding visualization features.

No gateway, no caching, no rate limiting, no prompt management. The evaluation features exist but are less developed than the tracing side. Setup requires more infrastructure knowledge than most other options on this list.

Full feature comparison

| Feature | Respan | Langfuse | LangSmith | Portkey | Braintrust | Phoenix |
|---|---|---|---|---|---|---|
| AI Gateway | 500+ models | -- | -- | 200+ models | -- | -- |
| One-line setup | Yes | No (SDK) | No (SDK) | Yes | No (SDK) | No (SDK) |
| Request logging | Full | Full | Full | Full | Basic | Full |
| Cost tracking | Advanced | Basic | Basic | Advanced | Basic | Basic |
| Session traces | Yes | Yes | Yes | Basic | Basic | Yes |
| Caching | Semantic | -- | -- | Edge | Basic | -- |
| Rate limiting | Yes | -- | -- | Yes | -- | -- |
| Automatic fallback | Yes | -- | -- | Yes | -- | -- |
| Prompt management | Advanced | Yes | Yes | Basic | Yes | -- |
| LLM-as-judge evals | Yes | Yes | Yes | -- | Yes | Yes |
| Custom evaluators | Yes | Yes | Yes | -- | Yes | Yes |
| Human annotation | Yes | Yes | Yes | -- | Yes | -- |
| CI/CD eval gates | Yes | -- | -- | -- | Yes | -- |
| Custom dashboards | 80+ types | Basic | Basic | Basic | Basic | Yes |
| Self-hosting | Enterprise | Yes | Enterprise | Yes | Yes | Yes |
| SOC 2 | Yes | Yes | Yes | Yes | -- | -- |
| HIPAA | Yes | -- | Enterprise | -- | -- | -- |

How to decide

Pick Respan if you want a single platform that covers everything Helicone did, plus deeper evaluations and prompt management. For most Helicone users, it also means the least migration friction.

Pick Langfuse if open-source self-hosting matters more than having a built-in gateway.

Pick LangSmith if your stack is LangChain through and through.

Pick Portkey if routing, caching, and failover are your main requirements and you'll handle evals elsewhere.

Pick Braintrust if evaluation rigor is the priority and you'll use other tools for observability.

Pick Phoenix if you want LLM traces inside your existing OTel infrastructure.

What's happening with Helicone

Helicone stays live in maintenance mode. The team has committed to security updates, bug fixes, and new model support. But no new features are coming. The Helicone founders have also said they'll help customers migrate to other platforms.

There's no immediate rush, but the platform is no longer being developed. If you're still running production workloads on Helicone, it's worth evaluating alternatives now while the Helicone team is still around to help with the transition.

Getting started with Respan

Respan has a free tier you can use to evaluate the platform. Check out the getting started guide or reach out to our team for migration support.

```shell
pip install respan-tracing
```

```python
from openai import OpenAI
from respan_tracing.decorators import workflow, task
from respan_tracing.main import RespanTelemetry

# Initialize Respan tracing once, before any LLM calls are made.
telemetry = RespanTelemetry()

client = OpenAI()  # reads OPENAI_API_KEY from the environment


@task(name="my_llm_call")
def call_llm(prompt):
    # Each call to this function is recorded as a task span.
    completion = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "user", "content": prompt}],
    )
    return completion.choices[0].message.content


@workflow(name="my_workflow")
def run():
    # The workflow span groups the task spans created inside it.
    return call_llm("Hello, world!")


result = run()
print(result)
```

Add the @workflow and @task decorators to your existing functions, and every LLM call made inside them is traced automatically.
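Decorator-based tracing like this generally works by opening a span when the decorated function starts, remembering it as the current parent, and closing it when the function returns. The sketch below shows that idea with the standard library's `contextvars`. It is a conceptual illustration, not the internals of `respan_tracing`; `traced`, `SPANS`, and the fake LLM call are hypothetical.

```python
import contextvars
import functools
import time
import uuid

# Hypothetical in-memory span store; a real SDK would export spans to a backend.
SPANS = []
_current_span = contextvars.ContextVar("current_span", default=None)


def traced(kind):
    """Decorator factory that records one span per call, linked to its parent span."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            span = {
                "id": uuid.uuid4().hex,
                "parent_id": _current_span.get(),  # None for a top-level call
                "name": func.__name__,
                "kind": kind,
            }
            token = _current_span.set(span["id"])  # this span is now the parent
            start = time.perf_counter()
            try:
                return func(*args, **kwargs)
            finally:
                span["duration_s"] = time.perf_counter() - start
                _current_span.reset(token)  # restore the previous parent
                SPANS.append(span)
        return wrapper
    return decorator


@traced("task")
def call_llm(prompt):
    return f"(fake completion for: {prompt})"  # stand-in for a real LLM call


@traced("workflow")
def run():
    return call_llm("Hello, world!")


result = run()
```

Keeping the current span in a context variable rather than a global stops concurrent workflows (in separate threads or asyncio tasks) from overwriting each other's parent.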