Set up Respan

- Sign up — Create an account at platform.respan.ai

- Create an API key — Generate one on the API keys page

- Add credits or a provider key — Add credits on the Credits page or connect your own provider key on the Integrations page

What is Respan Tracing?

The Respan tracing SDK uses OpenTelemetry under the hood. Add the respan_tracing package to your project and annotate your workflows with the @workflow and @task decorators to get full trace visibility.

Setup

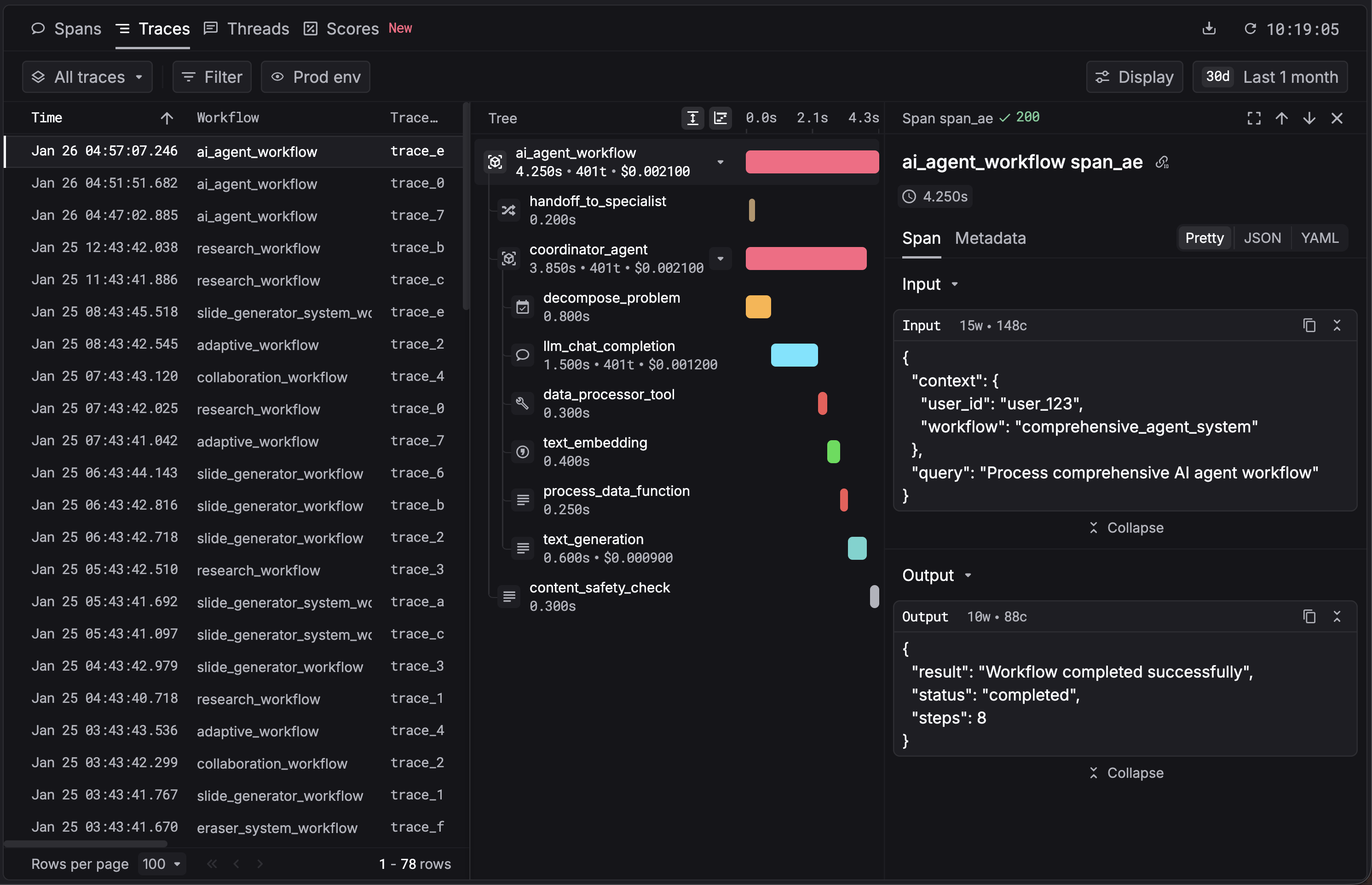

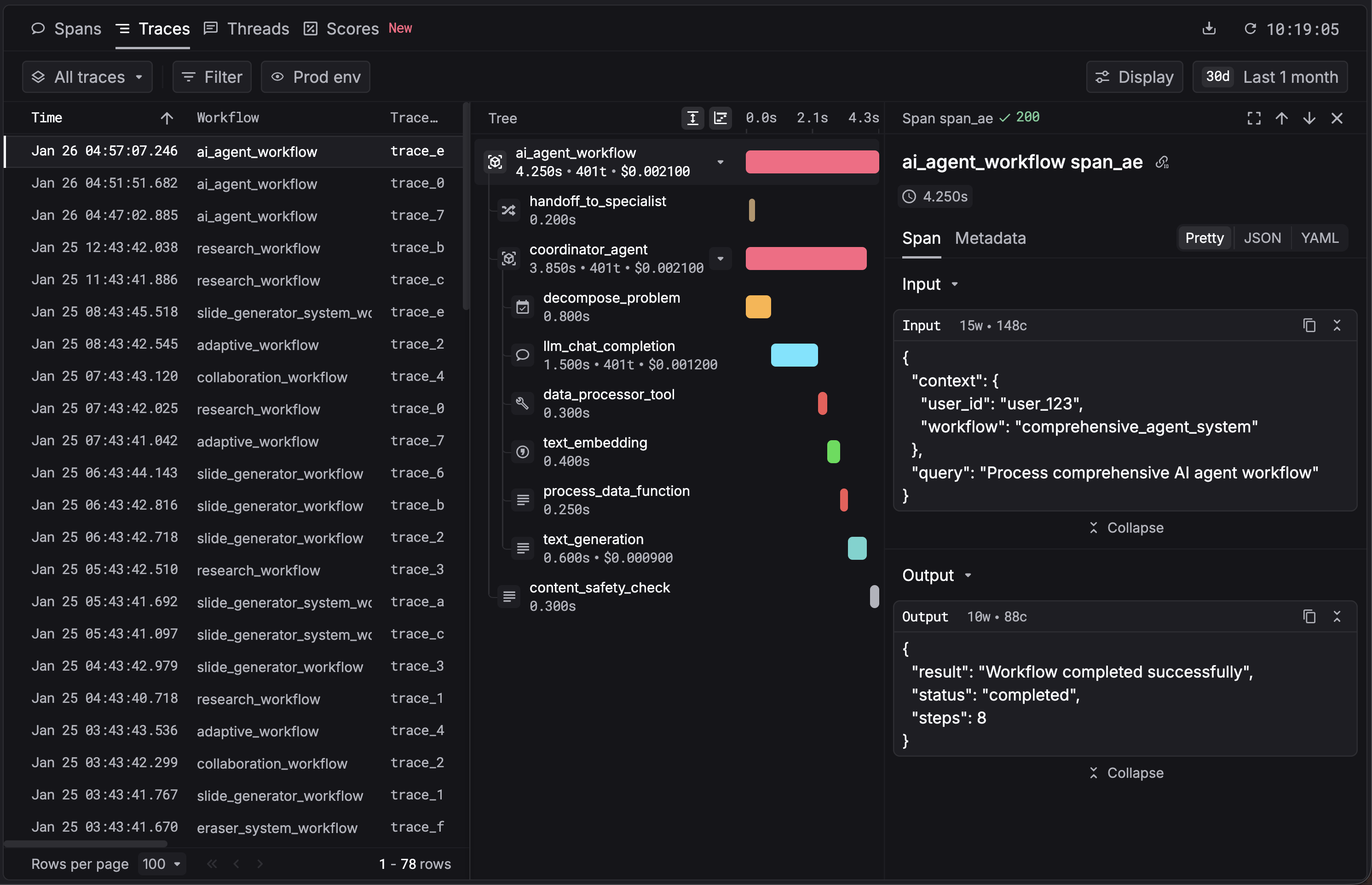

View your trace

Open the Traces page in the Respan dashboard.

Optional HTTP instrumentation: if you see logs like "Failed to initialize Requests instrumentation", install the corresponding OpenTelemetry instrumentations. This is optional; tracing works without them. Add them only if your app uses requests or urllib3.

Observability

With this integration, Respan auto-captures:

- Workflows — each @workflow-decorated function as a root trace

- Tasks — each @task-decorated function as a span

- LLM calls — model, input/output messages, token usage

- Performance metrics — latency per step

- Errors — failed tasks and error details